Like many in search engine optimization, I watch the major algorithmic updates or “boulders” roll down the hill from the Googleplex and see which of us will be knocked down. For those of us who get squashed, we stand back up, dust ourselves off, and try to assess why we were the unlucky few that got rolled. We wait for vague responses from Google or findings from the SEO community.

Panda taught us that “quality content” was the focus, and that even if your own site was in the clear, sites linking to you may have been devalued, reducing your overall authority. My overall perception of the Penguin update was that it was designed primarily to attack unnatural link practices and web spam techniques, along with a host of less prominent issues such as AdSense usage and internal linking.

Duplicate content was mentioned as part of meeting Google’s Quality Guidelines, but in my observation few people considered it a major factor in the update.

The Head-Scratching Period

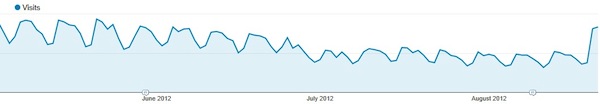

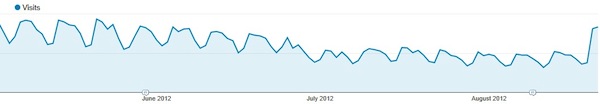

After the Penguin update hit in late April 2012, I noticed that one of my clients began to slowly lose rankings and traffic. It didn’t seem to be an overnight slam by Google so much as a slow decline in referrals.

I soon worked through my post-Penguin checklist and found that none of the major Penguin targets should have been hurting the client site. That left content quality as the remaining suspect.

I reviewed the content of the affected site sections. It looked fine, was informational, not keyword stuffed, and met Google’s Quality Guidelines. Or so I thought.

The Research

As time went on, we implemented other recommendations on unaffected areas of the site while I tried to determine why rankings and referrals continued to fall in the informational sections.

I asked the site owner who had originally written the content. He said he had written it himself over the last few years, using content he found on other sites.

First, I reviewed several years of organic data and noticed that the site had been hit very hard at the Panda rollout. Shaking my head, but glad we had found the issue, we went through the site to pinpoint how much content had been duplicated.

Using tools such as the Webconfs Similar Copy tool and Copyscape, we found several pages whose duplication ranged from a large percentage of copy scraped from other domains down to exact snippets lifted from copy that originated on other sites.
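To make “percentage of duplication” a little more concrete, here is a minimal sketch of one common way to estimate it: split both texts into overlapping word sequences (“shingles”) and measure what share of your page’s shingles also appear in the suspected source. This is a standard containment measure, not a description of how Webconfs or Copyscape actually score pages; the function names and the default shingle length of 5 words are assumptions chosen for illustration.

```python
import re


def shingles(text: str, k: int = 5) -> set[tuple[str, ...]]:
    """Return the set of overlapping k-word sequences (shingles) in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < k:
        # Very short texts become a single shingle (or nothing if empty).
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def duplication_percent(page: str, source: str, k: int = 5) -> float:
    """Percentage of the page's shingles that also occur in the source text.

    Hypothetical helper: a containment score, so 100.0 means every k-word
    run on the page can be found in the source, and 0.0 means none can.
    """
    page_sh = shingles(page, k)
    if not page_sh:
        return 0.0
    overlap = page_sh & shingles(source, k)
    return 100.0 * len(overlap) / len(page_sh)


if __name__ == "__main__":
    original = "This guide explains how to choose hiking boots for wet weather and rocky trails."
    rewritten = "Our own guide covers picking boots that handle wet weather and rocky trails."
    print(f"{duplication_percent(rewritten, original, k=3):.1f}% of shingles shared")
```

Running a rewritten page against the copy it replaced is one rough way to confirm that a rewrite actually lowered the overlap rather than just rearranging sentences.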

The Resolution

A content writer quickly rewrote these pages with unique copy to reduce the percentage of duplication. All of the affected pages were then republished in their new, unique state.

I had assumed recovery would be slow, since the penalty in this case had come on slowly. Surprisingly, our pre-Penguin rankings and traffic returned within a day.

Questions

The rankings and traffic came back and have stayed. After celebrating, it was time for an after-action review, which raised several questions:

- Are duplicate content, scraping, and the other issues covered by Google’s Quality Guidelines more of a factor in the Penguin update than the SEO community assumed?

- If your pre-update rankings can come back in a day, why weren’t they all lost in a day?

- I understand that Google makes multiple algorithm changes every day, but it is interesting that the ranking and traffic decline began right at Penguin. There have been other updates and refreshes since then, and I am used to a refresh loosening the leash on an algorithmic update, usually bringing some rankings improvement. Why did I continue to see a slow negative trend?

Ultimately, I think this story shows how important it is to know where your client’s or company’s content came from when it was created before your involvement in the site’s SEO.

A recent post by Danny Goodwin, “Google’s Rating Guidelines Add Page Quality to Human Reviews,” stuck with me because it reinforces how mindful we need to be of our site content. That means making sure it is, first and foremost, unique, but also engaging, regularly refreshed, and meaningful to readers if it is going to support SEO improvement.

In my opinion, unknown scraping is more dangerous than incidental on-site duplication caused by dynamic parameters, session IDs, or on-site copy spinning (e.g., copy variations across location pages). Whether done knowingly or not, all of these practices fall into the realm of content quality. Devoting attention to your site content lets you provide fresh, unique copy that the post-Caffeine (yet another big algorithm update) Googlebot will enjoy crawling.
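For the incidental, on-site kind of duplication, it can help to see how dynamic parameters and session IDs turn one page into many URLs. The sketch below assumes a hypothetical list of parameter names that change the URL without changing the copy, strips them, and groups URLs that collapse to the same address. The parameter list and function names are illustrative assumptions only; which parameters are safe to ignore depends entirely on how a given site is built.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters assumed NOT to change page content on this site.
IGNORED_PARAMS = {"sid", "sessionid", "phpsessid", "utm_source", "utm_medium", "utm_campaign"}


def canonical_url(url: str) -> str:
    """Drop ignored parameters and the fragment, lowercase the host, sort the rest."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    )
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), ""))


def group_duplicates(urls: list[str]) -> dict[str, list[str]]:
    """Map each canonical URL to the crawled URLs that collapse onto it (2+ only)."""
    groups: dict[str, list[str]] = defaultdict(list)
    for url in urls:
        groups[canonical_url(url)].append(url)
    return {canon: members for canon, members in groups.items() if len(members) > 1}


if __name__ == "__main__":
    crawled = [
        "https://example.com/boots?sid=abc123",
        "https://Example.com/boots?utm_source=newsletter",
        "https://example.com/boots",
        "https://example.com/tents",
    ]
    for canon, members in group_duplicates(crawled).items():
        print(canon, "<-", members)
```

Unlike scraped copy, this kind of duplication is visible from your own crawl data, which is part of why unknown scraping is the harder problem to catch.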
