Way back in the “good old days” of SEO, many “SEO firms” made a pretty good living “submitting your website to thousands of search engines.” While that was never a sound way of achieving SEO nirvana, today’s SEO gives us real opportunities to get our content – in all shapes, sizes, and forms – indexed in the search engines, to the best of our ability.

When it comes to the crawling phase of SEO and bot visibility, we often first check what we withhold from search engines via robots.txt and meta robots tags. But equally important are the content and URLs that we feed search engines.

Long ago, the best practice was to create an HTML sitemap of at least all your higher-level pages and link to it from the footer of every page. This gave search engines a buffet of site URLs from any one page on your site.

Then along came XML sitemaps. XML (Extensible Markup Language) is the format search engines prefer for digesting this kind of data.

With this tool at our disposal, a site administrator can tell search engines which pages of a site they want crawled, the relative priority of that content, and when each page was last updated.

Let’s walk through the first steps of creating sitemaps for varied content types.

How to Build a Standard XML Sitemap

Below is the anatomy of a standard XML sitemap URL entry.

<url>

<loc>http://www.example.com/mypage</loc>

<lastmod>2013-10-10</lastmod>

<changefreq>monthly</changefreq>

<priority>1</priority>

</url>

This points out the areas I noted above where you can provide information on URLs desired for crawl as well as additional URL information.
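If you would rather script this than type it by hand, here is a minimal sketch in Python that wraps entries like the one above in a complete file. The `build_sitemap` function name is my own, and the `urlset` wrapper and its namespace come from the sitemaps.org protocol rather than from the entry shown above, so treat this as an illustration under those assumptions, not a finished generator.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol (not shown in the entry above).
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Return sitemap XML text for a list of URL entry dicts (hypothetical helper)."""
    # An empty prefix makes this the default namespace, so tags print as <url>, <loc>, ...
    ET.register_namespace("", SITEMAP_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for entry in entries:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        # Emit only the tags the caller supplied, in the order used above.
        for tag in ("loc", "lastmod", "changefreq", "priority"):
            if tag in entry:
                ET.SubElement(url, f"{{{SITEMAP_NS}}}{tag}").text = str(entry[tag])
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


sitemap_xml = build_sitemap([
    {
        "loc": "http://www.example.com/mypage",
        "lastmod": "2013-10-10",
        "changefreq": "monthly",
        "priority": 1,
    },
])
print(sitemap_xml)
```

Saving the returned text as sitemap.xml gives you a file with the same URL entry shown above inside the required wrapper.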

Some content management systems can generate sitemaps dynamically. Is this easy? Yes. Is it error free? No. More on that in a moment.

If you don’t have the functionality to generate a sitemap with your CMS, then you must create an XML sitemap from scratch. You wouldn’t want to do this manually because of the time burden. That’s why there are tools for this.

There are many XML sitemap generators. Some are free, but they often have a crawl cap on site URLs, so this defeats the purpose.

Most good sitemap generators are paid. One fairly straightforward tool you can use for sitemap generation is Sitemap Writer Pro. It’s well worth the $25.

If you do choose to use other tools, choose the one that allows you to review the crawl of URLs and allows you to easily remove any duplicated URLs, dynamic parameters, excluded URLs, etc. Remember, you only want to include the pages on the site that you want a search engine to index and value.
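To make that review step concrete, here is a rough sketch of the kind of cleanup you want a tool to perform on a crawl. The `clean_urls` name and the `excluded_prefixes` argument are hypothetical, and I am assuming that "dynamic parameters" means any URL carrying a query string, which this sketch simply drops.

```python
from urllib.parse import urlsplit


def clean_urls(crawled_urls, excluded_prefixes=()):
    """Filter a crawl down to the URLs worth listing in a sitemap (hypothetical helper)."""
    seen = set()
    kept = []
    for raw in crawled_urls:
        url = raw.strip()
        parts = urlsplit(url)
        # Assumption: any query string marks a dynamic-parameter URL, so skip it.
        if parts.query:
            continue
        # Skip paths you never want indexed, such as admin or cart pages.
        if any(parts.path.startswith(prefix) for prefix in excluded_prefixes):
            continue
        # Skip exact duplicates while keeping the original crawl order.
        if url in seen:
            continue
        seen.add(url)
        kept.append(url)
    return kept


crawl = [
    "http://www.example.com/mypage",
    "http://www.example.com/mypage",
    "http://www.example.com/mypage?sessionid=42",
    "http://www.example.com/admin/login",
    "http://www.example.com/about",
]
print(clean_urls(crawl, excluded_prefixes=("/admin/",)))
```

Whatever tool you settle on, the output should look like this filtered list: one entry per page you actually want indexed.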

How to Upload and Submit Your Sitemap

Now that the standard XML sitemap is built, you need to upload the file to your site. This file should reside directly off the root, with a relevant file naming convention such as /sitemap.xml.

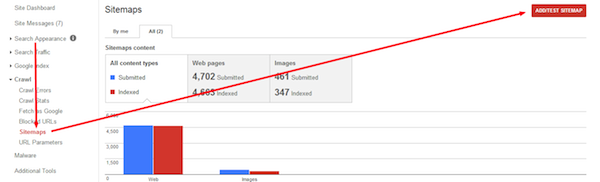

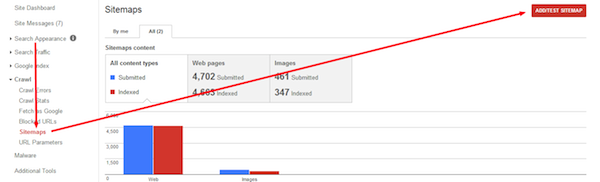

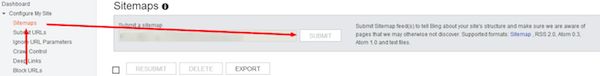

Once you’ve done this, go to Google Webmaster Tools and submit the sitemap:

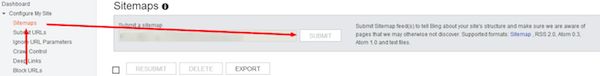

Then do the same with Bing Webmaster Tools:

Yes, they may find the sitemap on your site, but it’s smart to feed search engines this information and give Google and Bing the ability to report on indexing issues.

How to Find Sitemap Errors

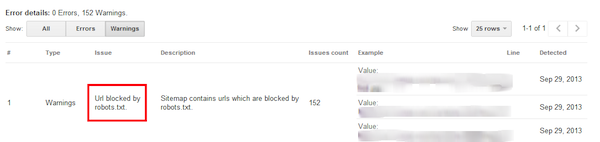

You’ve given your URLs to the top search engines in the preferred XML markup, but how are they indexing the content? Are they having any issues? The wonderful benefit of providing this information directly through your Webmaster Tools accounts is that you can review what content you may be withholding from search engines by accident.

Google has done a much better job of sitemap issue transparency than Bing, which provides a much smaller amount of data for review.

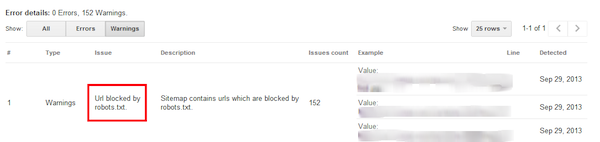

In this instance, we’ve submitted an XML sitemap and received an error that URLs in the sitemap are also blocked by the robots.txt file.

It’s important to pay attention to this type of error and warning information. The search engines may not even be able to read the XML sitemap. We can also glean information on which important URLs we are accidentally withholding from crawls in the robots.txt file.

This brings us back to the downside of dynamically generated sitemaps mentioned earlier: they can often include many URLs that are intentionally excluded from search engine view in the robots.txt file. The last thing we want to do is tell a search engine to both crawl and not crawl the same page at the same time.
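You can catch this conflict before a search engine reports it. The sketch below uses Python’s standard `urllib.robotparser` module to list sitemap URLs that your robots.txt rules disallow. The `blocked_sitemap_urls` name and the sample rules are mine, and the check follows Python’s reading of robots.txt, which may differ in edge cases from how a given search engine interprets the file.

```python
from urllib.robotparser import RobotFileParser


def blocked_sitemap_urls(robots_txt, sitemap_urls, user_agent="*"):
    """Return the sitemap URLs that the given robots.txt text disallows (hypothetical helper)."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return [url for url in sitemap_urls if not parser.can_fetch(user_agent, url)]


# Sample rules and URLs, invented for illustration.
robots_txt = "User-agent: *\nDisallow: /private/\n"
sitemap_urls = [
    "http://www.example.com/mypage",
    "http://www.example.com/private/report",
]
print(blocked_sitemap_urls(robots_txt, sitemap_urls))
```

Any URL this returns should either come out of the sitemap or come out of robots.txt, depending on which instruction you actually meant.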

Sitemap monitoring is essential for any SEO initiative. At its most basic, it will tell you how many URLs you have submitted in your XML sitemap, how many of them are currently indexed in Google, and when the sitemap file was last processed.

Wash, Rinse, Repeat

You may have run through the process above and are feeling pretty confident about the transparency and delivery of site URLs to the search giants. But aside from the standard XML sitemap information you can provide to Google and Bing, these engines will also accept information on your site’s image, video, news, and mobile content.

Conveniently, these types of sitemaps can be created, placed on the site, and submitted in the same fashion as the standard XML sitemap. The tool I recommended earlier can create these sitemaps as well.

Anatomy of Supporting XML Sitemaps

Image XML Sitemaps

Provide data on site images and the page locations of these images:

<url>

<loc>http://www.example.com/mypage</loc>

<lastmod>2013-10-10</lastmod>

<changefreq>monthly</changefreq>

<priority>1</priority>

<image:image>

<image:loc>

http://www.example.com/images/myfirstimage.gif

</image:loc>

</image:image>

<image:image>

<image:loc>

http://www.example.com/images/mysecondimage.gif

</image:loc>

</image:image>

</url>
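For a site with many images, an entry like this is tedious to write by hand. Here is a hedged sketch that builds the same structure in Python. The `build_image_sitemap` name is my own, and the two namespace URIs are the ones published for the sitemap protocol and Google’s image extension; they have to be declared on the wrapping `urlset` element, which the entry above does not show.

```python
import xml.etree.ElementTree as ET

# Namespaces for the base protocol and Google's image extension (not shown above).
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
IMAGE_NS = "http://www.google.com/schemas/sitemap-image/1.1"


def build_image_sitemap(pages):
    """Build sitemap XML from a dict mapping page URL -> list of image URLs (hypothetical helper)."""
    # Register prefixes so the output uses <url> and <image:image> rather than ns0/ns1.
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("image", IMAGE_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page_url, image_urls in pages.items():
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = page_url
        # One <image:image> block per image found on the page.
        for image_url in image_urls:
            image = ET.SubElement(url, f"{{{IMAGE_NS}}}image")
            ET.SubElement(image, f"{{{IMAGE_NS}}}loc").text = image_url
    return ET.tostring(urlset, encoding="unicode")


image_sitemap = build_image_sitemap({
    "http://www.example.com/mypage": [
        "http://www.example.com/images/myfirstimage.gif",
        "http://www.example.com/images/mysecondimage.gif",
    ],
})
print(image_sitemap)
```

The sketch leaves out the optional lastmod, changefreq, and priority tags to stay short; they can be added the same way as `loc`.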

Video XML Sitemaps

Instruct the search engines on the page locations of your videos and video embeds as well as information on their titles, descriptions, access levels, etc.:

<url>

<loc>

http://www.example.com/mypage</loc>

<lastmod>2013-05-06</lastmod>

<changefreq>monthly</changefreq>

<priority>0.5</priority>

<video:video>

<video:content_loc>

https://youtube.com/watch?v=W10j21236%3Den_US

</video:content_loc>

<video:player_loc allow_embed="yes">http://www.site.com/videoplayer.swf?video=123</video:player_loc>

<video:thumbnail_loc>

http://img.youtube.com/vi/W1021236=1/default.jpg

</video:thumbnail_loc>

<video:title>My Video Name</video:title>

<video:description>

My Video Description

</video:description>

<video:rating>2</video:rating>

<video:view_count>498</video:view_count>

<video:publication_date>2013-05-06</video:publication_date>

<video:family_friendly>yes</video:family_friendly>

<video:duration>10</video:duration>

<video:expiration_date>2016-05-06</video:expiration_date>

<video:requires_subscription>no</video:requires_subscription>

</video:video>

</url>

Mobile XML Sitemaps

Do you have mobile pages in a directory on your site? Let search engines know more about your URLs catering to mobile users:

<url>

<loc>http://www.example.com/mobile/oneofmymobilepages</loc>

<lastmod>2013-10-10</lastmod>

<changefreq>monthly</changefreq>

<priority>0.8</priority>

<mobile:mobile/>

</url>

News XML Sitemaps

News sites can provide information about news pieces, their location on the site, as well as news type, language, and access information:

<url>

<loc>http://www.example.com/news/mynewsarticle</loc>

<news:news>

<news:publication>

<news:name>My News Site</news:name>

<news:language>en</news:language>

</news:publication>

<news:access>Subscription</news:access>

<news:genres>PressRelease, Blog</news:genres>

<news:publication_date>2013-10-10</news:publication_date>

<news:title>Title of News Piece</news:title>

</news:news>

</url>
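Entries with this many nested tags are easy to break with a single mismatched closing tag, and a broken file is one a search engine may not be able to read at all. As a quick sanity check before uploading, a small Python sketch like the one below confirms that a sitemap at least parses as XML. The `is_well_formed` name and the trimmed sample are mine, and this only checks well-formedness, not whether the tags are valid for a news sitemap.

```python
import xml.etree.ElementTree as ET


def is_well_formed(xml_text):
    """Return True if the text parses as XML, False otherwise (hypothetical helper)."""
    try:
        ET.fromstring(xml_text)
    except ET.ParseError:
        return False
    return True


# A trimmed news entry, wrapped in a urlset that declares both namespaces.
good = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9" '
    'xmlns:news="http://www.google.com/schemas/sitemap-news/0.9">'
    "<url><loc>http://www.example.com/news/mynewsarticle</loc>"
    "<news:news><news:title>Title of News Piece</news:title></news:news>"
    "</url></urlset>"
)
# The same entry with the title closed by the wrong tag.
bad = good.replace("</news:title>", "</news:keywords>")

print(is_well_formed(good))
print(is_well_formed(bad))
```

The first call reports a readable file and the second reports a broken one, which is exactly the kind of slip worth catching before you submit.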

Conclusion

With as much effort as goes into developing great content, especially nowadays, taking the added time to make sure you’ve done everything in your power to get it fully indexed is critical to getting value back out of that effort.
