I conduct many large-scale SEO audits, and they often uncover large-scale SEO problems. On sites with millions of indexed pages, technical problems can sometimes have catastrophic results.

Even if a problem is the result of an honest mistake, it can still send dangerous SEO signals to the engines en masse. And there’s nothing worse than finding out you’re violating webmaster guidelines when you had no idea you were breaking the rules.

What follows are a few examples of how technical problems can send horrible signals to the engines. Some of you reading this post right now may have these problems buried in your own websites. If that’s the case, I hope this post helps you identify them so you can fix them.

Remember, Google and Bing can’t tell whether you caused a problem on purpose or by mistake. So the ramifications can be just as serious even if you never meant to structure your site the way it is now.

1. You’re Kind of Cloaking

When performing audits, I use several tools to crawl websites. When analyzing those crawls, I always check the pages that contain an excessive number of links. It’s amazing what you can find.
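If you want to reproduce that check yourself, here’s a minimal standard-library Python sketch that counts `<a href>` links per page and flags the outliers. It assumes you already have crawled HTML in a dict keyed by URL; the 300-link threshold is a hypothetical cutoff, not a number published by any search engine.

```python
from html.parser import HTMLParser


class LinkCounter(HTMLParser):
    """Counts <a> elements that have an href attribute."""

    def __init__(self):
        super().__init__()
        self.count = 0

    def handle_starttag(self, tag, attrs):
        if tag == "a" and any(name == "href" for name, _ in attrs):
            self.count += 1


def count_links(html):
    parser = LinkCounter()
    parser.feed(html)
    parser.close()
    return parser.count


def pages_with_excessive_links(pages, threshold=300):
    """Return (url, link_count) pairs above `threshold`, most links first.

    `pages` maps URL -> HTML from a crawl (hypothetical input format).
    The default of 300 is an arbitrary, hypothetical cutoff.
    """
    flagged = []
    for url, html in pages.items():
        n = count_links(html)
        if n > threshold:
            flagged.append((url, n))
    return sorted(flagged, key=lambda item: item[1], reverse=True)


crawl = {
    "https://www.example.com/a": '<a href="/x">x</a>' * 5,
    "https://www.example.com/b": '<a href="/y">y</a>' * 500,
}
print(pages_with_excessive_links(crawl))  # [('https://www.example.com/b', 500)]
```

Sorting by link count puts the pages most worth a manual look at the top of the list.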

Sometimes my analysis reveals a set of exact match anchor text links on certain pages or sections of the site that aren’t visible on the page. Or worse, the hidden links are present sitewide (in the code, but not visible to users).
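One rough way to surface candidates is to look for links that sit inside elements hidden in the markup itself. The Python sketch below only catches the `hidden` attribute and inline `display:none` or `visibility:hidden` styles. Links hidden by external CSS classes, or by JavaScript menus that never fire (like the subnavigation and breadcrumb cases described later in this section), need a rendering browser to detect, so treat an empty result as inconclusive.

```python
from html.parser import HTMLParser

# Void elements never get an end tag, so they are not pushed on the stack.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "param", "source", "track", "wbr"}


def _is_hidden(attrs):
    """True if the element is hidden via the `hidden` attribute or an inline style."""
    attr_map = dict(attrs)
    if "hidden" in attr_map:
        return True
    style = (attr_map.get("style") or "").replace(" ", "").lower()
    return "display:none" in style or "visibility:hidden" in style


class HiddenLinkFinder(HTMLParser):
    def __init__(self):
        super().__init__()
        self.stack = []         # (tag, hidden) for each open element
        self.hidden_links = []  # [href, anchor_text] pairs
        self._current = None    # hidden link whose anchor text is being read

    def _inside_hidden(self):
        return any(hidden for _, hidden in self.stack)

    def handle_starttag(self, tag, attrs):
        hidden = _is_hidden(attrs)
        if tag == "a" and (hidden or self._inside_hidden()):
            self._current = [dict(attrs).get("href"), ""]
            self.hidden_links.append(self._current)
        if tag not in VOID_TAGS:
            self.stack.append((tag, hidden))

    def handle_endtag(self, tag):
        if tag == "a":
            self._current = None
        # Pop back to the matching open tag, tolerating unclosed children.
        for i in range(len(self.stack) - 1, -1, -1):
            if self.stack[i][0] == tag:
                del self.stack[i:]
                break

    def handle_data(self, data):
        if self._current is not None:
            self._current[1] += data


def find_hidden_links(html):
    """Return (href, anchor_text) for links inside statically hidden markup."""
    finder = HiddenLinkFinder()
    finder.feed(html)
    finder.close()
    return [(href, text.strip()) for href, text in finder.hidden_links]
```

Comparing the anchor text of the returned links against your target keywords makes exact match patterns easy to spot.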

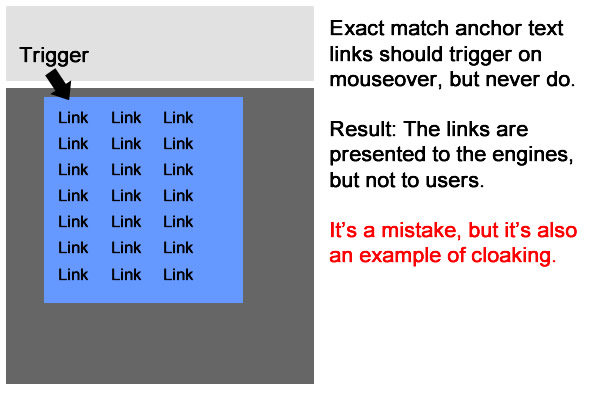

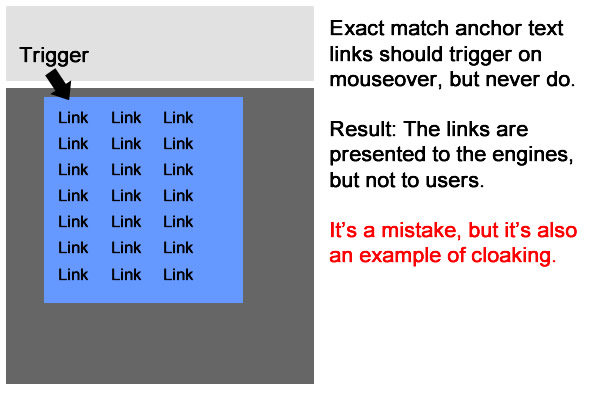

If the site provides the engines with a set of exact match anchor text links but doesn’t show that content to users, then the site is technically cloaking (serving different content to the engines than to users). And cloaking violates Google’s Webmaster Guidelines.

Now, it’s one thing if a company knows it’s doing this, but in a number of cases the teams I was helping had no idea they were cloaking. In those cases, technical problems had created a cloaking situation nobody knew about.

Once I reveal the problem to developers, it’s typically not long before they track down the coding issue that was hiding the content from users. They simply had no idea this was happening on certain pages or in certain sections.

For example, during a recent audit, I found large sections of a subnavigation that never appeared when you moused over their top-level categories. The main navigation should have triggered a secondary menu with links to specific sections of the site (using rich anchor text). Instead, nothing happened when you moused over the menu. As a result, the site was providing a relatively large number of rich anchor text links to the engines, but users never saw that content. And this was happening on more than 10,000 pages.

Another example I came across was a faulty breadcrumb trail that never appeared on the page but still resided in the code. So across 2 million pages on the site, exact match anchor text links were in the code for the engines to crawl, but users could neither see nor access them. The site owners weren’t trying to cloak, but they were cloaking due to technical problems.

And then there’s human error. I once audited a site whose code contained an entire navigation that users couldn’t access in any way. When I asked my client about the problem, they explained that they had decided to hide the content for usability purposes. They simply didn’t know that hiding content from users while presenting it to the engines was a bad thing.

As these examples illustrate, quasi-cloaking can manifest itself in several ways.

Recommendation: Monitor your own site on a regular basis, or have your SEO do this for you. Audits via manual analysis and crawling should be conducted at regular intervals. In addition, thoroughly testing changes before they are released is critically important, since it can keep technical problems (and human errors) from being unleashed on a site. Don’t cloak by mistake. It’s easier to do than you think.

2. Your rel=canonical Becomes rel=catastrophical

Rel=canonical is a simple line of code, but it can pack quite a negative punch when it isn’t handled correctly. That’s especially true on large-scale sites with hundreds of thousands or millions of indexed pages. The wrong rel=canonical strategy can send all sorts of bad signals to the engines and destroy rankings and organic search traffic.

Rel=canonical should be used on pages that are duplicates (pages whose content is identical or nearly identical to another page’s), where it helps the engines consolidate indexing properties to the correct URL (the canonical URL). During audits, I’ve seen the canonical URL tag botched more times than I can count. And in aggregate, a poor rel=canonical strategy can fire millions of bad SEO signals at the engines, which can severely impact rankings and traffic.
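For reference, the canonical URL tag is a `<link rel="canonical" href="...">` element in the page’s `<head>`. Here’s a minimal Python sketch for pulling it out of crawled HTML so you can see where each duplicate points. The example URLs are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class CanonicalParser(HTMLParser):
    """Records the href of the first <link rel="canonical"> element."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        rel = (attr_map.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel and self.canonical is None:
            self.canonical = attr_map.get("href")


def get_canonical(page_url, html):
    """Return the absolute canonical URL declared in `html`, or None."""
    parser = CanonicalParser()
    parser.feed(html)
    parser.close()
    if parser.canonical is None:
        return None
    # Relative hrefs are resolved against the page's own URL.
    return urljoin(page_url, parser.canonical)


# A sorted (duplicate) product URL pointing at its canonical version.
duplicate_html = '<head><link rel="canonical" href="/shoes/red-sneaker"></head>'
print(get_canonical("https://www.example.com/shoes/red-sneaker?sort=price", duplicate_html))
# https://www.example.com/shoes/red-sneaker
```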

For example, I audited a large site (10 million+ pages indexed) that implemented rel=canonical incorrectly during a site refresh. All of the product pages suddenly included a rel=canonical tag pointing to the internal search results page, and that page carried a meta robots tag set to noindex, nofollow. In effect, the site told the engines that the preferred version of every product page was a page that shouldn’t be indexed. Needless to say, this caused massive SEO problems for the site. Traffic from organic search plummeted almost immediately.

Once we found the problem and implemented a fix, traffic began to rebound fairly quickly. It’s a great example of how one line of code can destroy SEO.
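A crawl-based check can catch this pattern before it ships. The Python sketch below assumes a hypothetical dict of crawled URL to HTML and flags any page whose rel=canonical target (which may be the page itself) carries a meta robots noindex, the same product-page-to-search-results chain described above. It reads only the meta robots tag, not an `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class HeadSignals(HTMLParser):
    """Collects the rel=canonical href and meta robots directives of a page."""

    def __init__(self):
        super().__init__()
        self.canonical = None
        self.robots = set()

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "link" and "canonical" in (a.get("rel") or "").lower().split():
            self.canonical = self.canonical or a.get("href")
        elif tag == "meta" and (a.get("name") or "").lower() == "robots":
            content = (a.get("content") or "").lower()
            self.robots.update(d.strip() for d in content.split(","))


def read_head_signals(html):
    parser = HeadSignals()
    parser.feed(html)
    parser.close()
    return parser


def canonicals_to_noindex(pages):
    """Return (page, canonical_target) pairs where the target is noindex.

    `pages` maps URL -> HTML (hypothetical crawl output). Targets that were
    not crawled are skipped rather than guessed at.
    """
    problems = []
    for url, html in pages.items():
        href = read_head_signals(html).canonical
        if not href:
            continue
        target = urljoin(url, href)
        if target in pages and "noindex" in read_head_signals(pages[target]).robots:
            problems.append((url, target))
    return problems


crawl = {
    "https://shop.example/p/1": '<link rel="canonical" href="/search">',
    "https://shop.example/search": '<meta name="robots" content="noindex, nofollow">',
}
print(canonicals_to_noindex(crawl))
# [('https://shop.example/p/1', 'https://shop.example/search')]
```

Running a check like this against a staging crawl before each release is one way to follow the testing advice from the cloaking section.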

Recommendation: If you’re unsure how best to implement rel=canonical, then don’t implement it at all. And if you do want to implement the canonical URL tag (which you should), map out a strong strategy with the help of an experienced SEO professional. Make sure you’re using rel=canonical on duplicate pages, not as a substitute for 301 redirects. Make sure you’re passing search equity to the right pages rather than implementing a far-reaching rel=canonical setup like the one described above. Remember, it’s a simple line of code, but it can have catastrophic results.

3. Your 301s Aren’t Redirecting

Imagine you did your homework when redesigning or migrating your website. You mapped out a strong 301 redirection plan, worked with your developers on implementing that plan, and tested it thoroughly before releasing it to production.

But then rankings and traffic start to drop a month later. What happened?

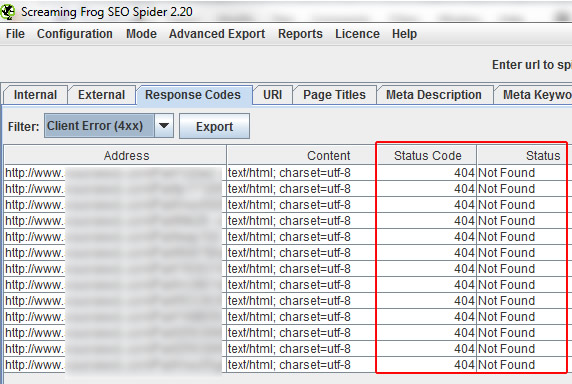

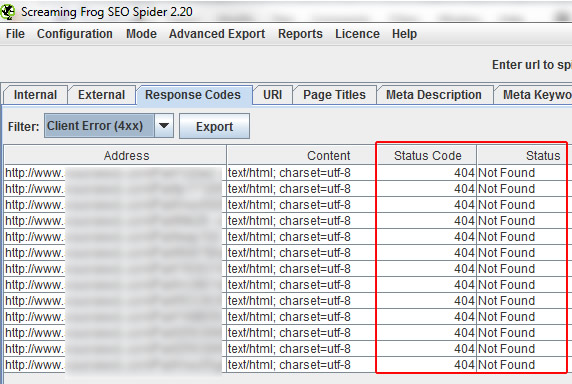

During audits, especially after redesigns or migrations, I sometimes find that all of the 301 redirects that were implemented have suddenly stopped working. When I test and crawl the top landing pages from the old site, they return 404s (Page Not Found).

This can be caused by a number of technical problems, including code changes that roll back redirects, database tables that bomb, and code changes that prevent 301s from firing. Several of the audits I have conducted after redesigns or migrations have revealed this problem.

Unfortunately, the migration doesn’t end when the new site goes live. You need to make sure the changes you implemented stay in place over time. If they don’t, you risk losing search equity, which can impact rankings and organic search traffic.

Recommendation: Thoroughly test your 301 redirects before every release. Make sure new code changes won’t roll back redirects or bomb the 301 process. Keep a file of top landing pages from the old site and crawl those URLs periodically to verify that your 301s still return 301s. Remember, a link is a horrible thing to waste, and broken 301s can essentially destroy years of natural link building.
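Here’s a minimal Python sketch of that periodic crawl. It assumes a hypothetical mapping of old top landing page URLs to the new URLs they should redirect to, sends a HEAD request that does not follow redirects, and reports anything that isn’t a 301 to the expected target. Some servers answer HEAD differently from GET, so switching the method to GET is a reasonable fallback; network errors are not handled here.

```python
import http.client
from urllib.parse import urljoin, urlsplit


def fetch_status(url, timeout=10):
    """HEAD `url` without following redirects; return (status, Location header)."""
    parts = urlsplit(url)
    if parts.scheme == "https":
        conn = http.client.HTTPSConnection(parts.hostname, parts.port, timeout=timeout)
    else:
        conn = http.client.HTTPConnection(parts.hostname, parts.port, timeout=timeout)
    path = (parts.path or "/") + ("?" + parts.query if parts.query else "")
    try:
        conn.request("HEAD", path)
        response = conn.getresponse()
        return response.status, response.getheader("Location")
    finally:
        conn.close()


def check_redirect(old_url, status, location, expected_target):
    """Compare one old URL's response with the redirect plan."""
    if status != 301:
        return f"BROKEN: expected 301, got {status}"
    # Location may be relative, so resolve it against the old URL.
    target = urljoin(old_url, location or "")
    if target != expected_target:
        return f"WRONG TARGET: {target}"
    return "OK"


def audit_redirects(redirect_plan):
    """`redirect_plan` maps old top landing page URLs to their intended new URLs."""
    for old_url, new_url in redirect_plan.items():
        status, location = fetch_status(old_url)
        verdict = check_redirect(old_url, status, location, new_url)
        if verdict != "OK":
            print(old_url, verdict)
```

Keeping the classification in `check_redirect`, separate from the network call, makes it easy to test the logic offline and to reuse it with whatever crawler you already run.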

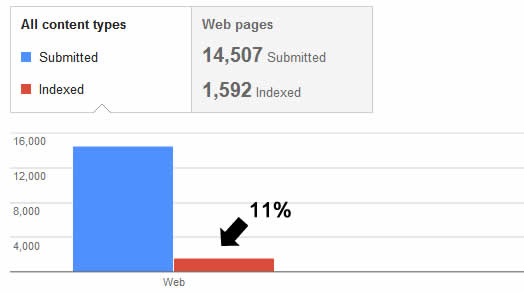

4. Your Sitemaps Are Dirty

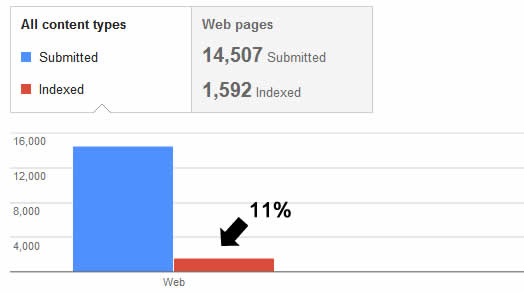

When submitting XML sitemaps, the last thing you want to do is feed the engines bad URLs, such as URLs that return 404, 302, or 500 status codes.

Sitemaps that contain bad URLs are called “dirty sitemaps”, and they can cause the engines to lose trust in those sitemaps. Duane Forrester from Bing explained that Bing has very little tolerance for dirty sitemaps.

Here’s a quote from his interview with Eric Enge:

Your Sitemaps need to be clean. We have a 1% allowance for dirt in a Sitemap. Examples of dirt are if we click on a URL and we see a redirect, a 404 or a 500 code. If we see more than a 1% level of dirt, we begin losing trust in the Sitemap.

Needless to say, you should only provide canonical URLs in your XML sitemaps (non-duplicate URLs that return a 200 status code).
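To make the 1% figure concrete: a sitemap with 10,000 URLs can contain at most 100 non-200 URLs before crossing the threshold Forrester describes. The Python sketch below treats every non-200 response as dirt. That definition is an assumption based on his examples (redirects, 404s, 500s); Bing hasn’t published its exact calculation.

```python
def dirt_ratio(status_codes):
    """Share of sitemap URLs that did not return a 200 (assumed definition of dirt)."""
    if not status_codes:
        return 0.0
    dirty = sum(1 for code in status_codes if code != 200)
    return dirty / len(status_codes)


def within_allowance(status_codes, allowance=0.01):
    """True if dirt stays at or below the 1% allowance from the quote above."""
    return dirt_ratio(status_codes) <= allowance


codes = [200] * 9_850 + [404] * 100 + [301] * 50
print(dirt_ratio(codes))        # 0.015
print(within_allowance(codes))  # False
```

In this example, 150 bad URLs out of 10,000 is 1.5%, half again over the allowance, even though 98.5% of the sitemap is clean.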

During audits, I dig into the XML sitemap reporting in Google Webmaster Tools and also crawl the sitemaps themselves. In the process, I have found URLs that should never have been included in XML sitemaps.
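Crawling a sitemap starts with pulling out its `<loc>` entries. This Python sketch uses the standard library’s ElementTree with the sitemaps.org namespace. It takes a caller-supplied `get_status` function (hypothetical; for example, a HEAD request that doesn’t follow redirects) so the parsing logic can be tested without touching the network.

```python
import xml.etree.ElementTree as ET

# <loc> elements live in the sitemaps.org namespace.
LOC_TAG = "{http://www.sitemaps.org/schemas/sitemap/0.9}loc"


def sitemap_locs(xml_text):
    """Return every <loc> URL in a sitemap or sitemap index document."""
    root = ET.fromstring(xml_text)
    return [el.text.strip() for el in root.iter(LOC_TAG) if el.text]


def dirty_entries(xml_text, get_status):
    """Return (url, status) pairs for sitemap URLs that don't answer 200.

    `get_status` is a hypothetical, caller-supplied function: URL -> status code.
    """
    results = []
    for url in sitemap_locs(xml_text):
        status = get_status(url)
        if status != 200:
            results.append((url, status))
    return results


SAMPLE = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc></url>
  <url><loc>https://www.example.com/old-page</loc></url>
</urlset>"""
fake_statuses = {"https://www.example.com/": 200, "https://www.example.com/old-page": 404}
print(dirty_entries(SAMPLE, fake_statuses.get))
# [('https://www.example.com/old-page', 404)]
```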

When I work with a client’s developers, I sometimes find that the technical process for generating the sitemaps is flawed. That means every time the sitemaps are generated, they fill up with URLs that bomb.

For example, one XML sitemap I crawled contained thousands of IP address-based URLs (instead of URLs using the domain name). From a canonicalization standpoint, your site shouldn’t resolve via its IP address, or you can run into a massive duplicate content problem. Webmaster Tools does flag this error, since you can’t submit URLs that fall outside the verified domain, but finding the problem helped my client on several levels.

First, we learned that a coding glitch was generating URLs with the IP address instead of the domain name, and seeing those URLs helped us track down the problem. Second, my client was feeding the engines dirty sitemaps, which, as Forrester explained, can result in a loss of trust. Third, we were able to revisit their XML sitemap strategy and form a stronger plan for handling the millions of URLs on the site.
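A check for this kind of glitch is easy to automate. The Python sketch below flags sitemap URLs whose host is a raw IP address, or anything other than the site’s canonical host name. `www.example.com` and the IP address in the example are placeholders.

```python
import ipaddress
from urllib.parse import urlsplit


def host_problem(url, canonical_host):
    """Describe what's wrong with a sitemap URL's host, or return None if it's fine."""
    host = urlsplit(url).hostname  # lowercased, with port and IPv6 brackets removed
    if host is None:
        return "no host"
    try:
        ipaddress.ip_address(host)
        return "IP address instead of domain"
    except ValueError:
        pass  # not an IP literal, so compare it with the canonical host name
    if host != canonical_host:
        return f"unexpected host {host}"
    return None


def off_domain_urls(urls, canonical_host):
    flagged = []
    for url in urls:
        problem = host_problem(url, canonical_host)
        if problem:
            flagged.append((url, problem))
    return flagged


urls = [
    "https://www.example.com/shoes",
    "http://203.0.113.7/shoes",
]
print(off_domain_urls(urls, "www.example.com"))
# [('http://203.0.113.7/shoes', 'IP address instead of domain')]
```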

Recommendation: Avoid dirty sitemaps at all costs. Don’t send the engines on a wild goose chase for URLs that don’t resolve correctly. Only provide canonical URLs in your XML sitemaps. I recommend having a process in place for routinely checking your XML sitemap reporting in Webmaster Tools and crawling your sitemaps periodically. That process can help you catch technical issues before they cause bigger problems.

Summary: Bad Signals Can Lead to Bad SEO

These four situations are great examples of how hidden technical problems can send dangerous SEO signals to the engines. And unless you uncover those problems, they can remain in place over the long term, negatively impacting a site’s rankings and organic search traffic.

My final recommendation is to make sure you continually analyze your website from an SEO standpoint. Just because you implemented a change four months ago doesn’t mean it’s still working correctly.

Try to keep technical problems on your test server, where they can’t hurt anyone or anything. Doing so will go a long way toward maintaining your rankings and traffic.
