The latest Panda update began rolling out the week of July 15, 2013, and Google confirmed it. I was happy to see the confirmation, since Google had said it would stop confirming Panda updates.

During the July update, many webmasters reported recoveries of varying degrees, which seemed to match what Google’s Distinguished Engineer Matt Cutts had said about the “softening” of Panda. This was also the first rollout of the new and improved Panda, which can take 10 days to roll out fully.

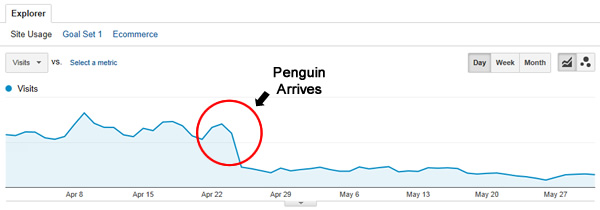

But remember, there were other significant updates in May: Penguin 2.0 and Phantom. Since many sites were hit by those updates too, I wanted to see how the new Panda update affected Penguin and Phantom victims.

And we can’t leave out “Phanteguin” victims (companies hit by both Phantom and Penguin 2.0). Could they recover during the Panda update too?

Needless to say, I was eager to analyze client websites hit by Panda, Phantom, Penguin, or the killer combo of Phanteguin. What I found was fascinating, and it confirmed some beliefs I already held about the relationship between algorithm updates.

For example, I’ve witnessed Penguin recoveries during Panda updates before, which led me to believe there was some connection between the two. Well, the latest Panda update provides even more data regarding the relationship between Panda, Penguin, and even Phantom.

Pandas Helping Penguins, and Exorcising Phantoms

Past case studies about Penguin recoveries during Panda updates led me to believe that Panda and Penguin were connected somehow, although I’m still not sure exactly how. It almost seems like one bubbles up to the other, and if all is OK on both fronts, a site can recover.

Well, I saw this again during the latest Panda update, but now we have a ghostly new friend named Phantom who has joined the party.

During the latest Panda update, I saw several companies recover from Panda to varying degrees (which makes sense during a Panda update), but I also saw websites hit by Penguin and Phantom recover. And to make matters even more complex, I saw a website hit by Phanteguin (both Phantom and Penguin) recover.

Today, I’m going to walk you through three examples of recoveries, so you can see that Pandas do indeed like Penguins, and Phantoms can be exorcised. My hope is that by providing a few examples of sites that recovered during the latest Panda update, you can start to better understand the relationship between algorithm updates, and how Panda is evolving.

Case 1: Panda Recovery – Fixing Technical Problems + The Softer Side of Panda

Several of my clients recovered from Panda hits during the July 15 update, and I’ll cover one particularly interesting case now. The website had seen a significant loss in traffic over the preceding months. Based on a technical SEO audit I conducted, the company performed a massive amount of work on the site during that period.

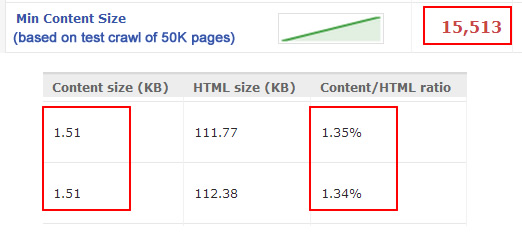

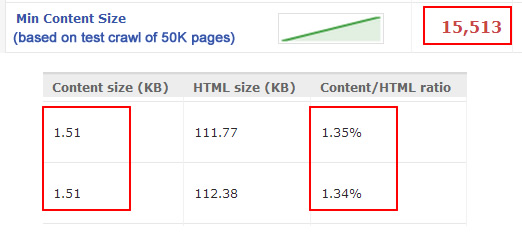

The site had a number of serious technical problems, and many of them have been rectified. It’s a large site with over 10 million pages indexed, and it inherently has thinner content than any SEO professional would like to see. But that’s an industry-wide issue for this client, so in my opinion it was never fair for it to be held against them.

The site also had technical issues causing duplicate content, massive XML sitemap issues (causing dirty sitemaps), and many soft 404s. A number of these technical problems led to poor quality signals being presented to Google, which eventually caused problems Panda-wise.
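If you want to check your own site for the same problems, here is a minimal Python sketch of two checks from an audit like this one. The first flags soft 404s, which are pages that return a 200 status but look like error or empty pages. The second flags “dirty” sitemap entries, which are URLs listed in the XML sitemap that don’t resolve cleanly. It’s an illustration under stated assumptions, not the audit I ran. The `CrawledPage` record, the not-found phrases, and the word-count threshold are all hypothetical and would need tuning per site. The sketch also assumes you already have crawl results from your crawler of choice.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass

# Namespace used by standard XML sitemaps (sitemaps.org protocol).
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical heuristics: adjust both for the site being audited.
NOT_FOUND_PHRASES = ("page not found", "no results found", "no longer available")
MIN_WORDS = 20


@dataclass
class CrawledPage:
    url: str
    status: int  # HTTP status code the crawler received
    text: str    # visible body text extracted by the crawler


def sitemap_urls(sitemap_xml: str) -> list[str]:
    """Return every <loc> value in a standard <urlset> sitemap."""
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)]


def is_soft_404(page: CrawledPage) -> bool:
    """Flag a 200 response that is nearly empty or reads like an error page."""
    if page.status != 200:
        return False
    body = page.text.lower()
    nearly_empty = len(body.split()) < MIN_WORDS
    return nearly_empty or any(phrase in body for phrase in NOT_FOUND_PHRASES)


def dirty_sitemap_entries(sitemap_xml: str,
                          crawl: dict[str, CrawledPage]) -> list[str]:
    """Sitemap URLs that weren't crawled, didn't return 200, or look like soft 404s."""
    dirty = []
    for url in sitemap_urls(sitemap_xml):
        page = crawl.get(url)
        if page is None or page.status != 200 or is_soft_404(page):
            dirty.append(url)
    return dirty
```

The goal is a sitemap that lists only clean, 200-status URLs. Heuristics like these will produce false positives, so flagged URLs still deserve a manual review before anything is removed.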

Thin Content Identified During a Test Crawl of 50K Pages

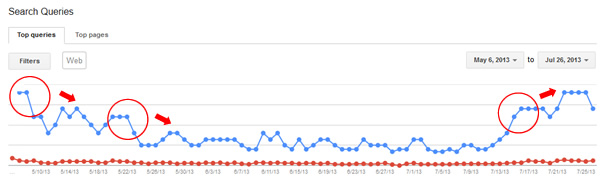

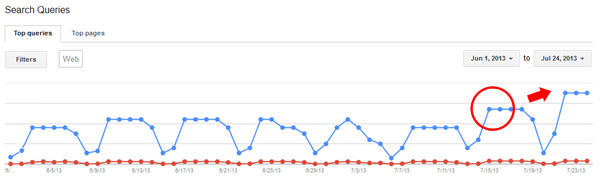

But on July 15, the site experienced a nice bump in Google organic traffic. Impressions more than doubled and Google organic traffic increased by 60 percent.

The site in question fixed the technical issues causing duplicate content, soft 404s, etc. To me, those issues were the real problem, since users still benefited from the content, even if it was “thin.”

I also think this is a great example of the softening of Panda. Google’s correction was the right one, and I’m glad to see it made the change to its algorithm.

To sum up this example, technical changes cut down on duplicate content and soft 404s, but the original content remained (and it was technically thin). The new Panda seemed OK with the content, and rewarded the site for fixing the issues sending dangerous quality signals to Google.

Case 2: Panda Recovery While Still Being Impacted by Penguin

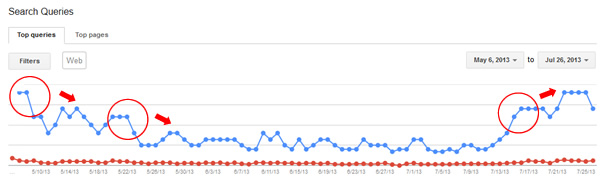

One site I analyzed after the latest Panda update saw a nice increase in Google organic traffic, even though it had gotten hammered by Penguin (and had not recovered from that algorithm update yet).

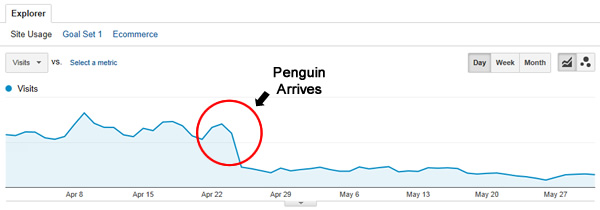

From a Panda perspective, the site got hit in November 2012, losing approximately 42 percent of its already anemic Google organic search traffic, and it remained at that level until the latest Panda update in July 2013. Unfortunately, that meant the website had been hit by Pandeguin, or both Panda and Penguin.

Now, I’ve seen Penguin recoveries during Panda updates before, but I wasn’t sure whether a site being severely impacted by Penguin could see a Panda recovery. This case shows that you can recover from Panda even when you’re still being severely hampered by Penguin. The site in question got hammered during the first Penguin update (April 24, 2012), and lost ~65 percent of its Google organic traffic overnight.

The business owner worked hard to remove as many unnatural links as possible, and used the disavow tool, but didn’t recover during subsequent Penguin updates (even Penguin 2.0). Actually, the site saw another dip during Penguin 2.0.

July 15 arrived and the site recovered from Panda to its pre-Panda levels. Specifically, the website doubled its organic search traffic from Google, but it’s still below where it was pre-Penguin 1.0.

This makes complete sense, because from my perspective the site still has more links to remove, so it shouldn’t return to its pre-Penguin levels yet. Returning to pre-Panda levels makes sense, but the site still needs more work Penguin-wise in order to recover.
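The math behind that reasoning is worth spelling out, because percentage losses compound. This quick Python sketch uses the approximate figures above: about 65 percent lost to Penguin 1.0, then about 42 percent of what remained lost to Panda. It shows why reversing only the Panda hit leaves the site well short of its pre-Penguin traffic. It treats each hit as a simple multiplicative loss, which is an assumption. It also leaves out the Penguin 2.0 dip, since that drop wasn’t quantified.

```python
def remaining_share(*losses: float) -> float:
    """Fraction of original traffic left after successive fractional losses.

    Each loss applies to whatever traffic remained after the previous one.
    """
    share = 1.0
    for loss in losses:
        share *= 1.0 - loss
    return share


# Approximate figures from this case (Penguin 1.0, then Panda).
after_both_hits = remaining_share(0.65, 0.42)   # about 0.20 of pre-Penguin traffic
after_panda_recovery = remaining_share(0.65)    # about 0.35 of pre-Penguin traffic

print(f"After Penguin 1.0 + Panda: {after_both_hits:.0%} of pre-Penguin traffic")
print(f"After the Panda recovery:  {after_panda_recovery:.0%} of pre-Penguin traffic")
```

Undoing the Panda loss brings the site back to roughly 35 percent of its pre-Penguin traffic, still about 65 percent below where it started. That gap is the part only a Penguin recovery can close.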

To quickly recap, the website was impacted by Penguin 1.0 and 2.0, and hit by Panda in November 2012 (or Pandeguin). The webmaster worked hard to remove unnatural links, and used the disavow tool as well, but hasn’t removed as many links as needed to recover from Penguin.

The site didn’t recover during Penguin updates, but ended up recovering during the latest Panda update where it returned to its pre-Panda levels. It’s a great example of how a website could recover from Panda even when it’s still being impacted by Penguin.

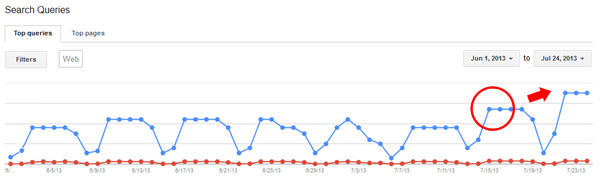

Case 3: Phanteguin Recovery – Penguin and Phantom Recovery During a Panda Update

This might be my favorite case from the latest Panda update.

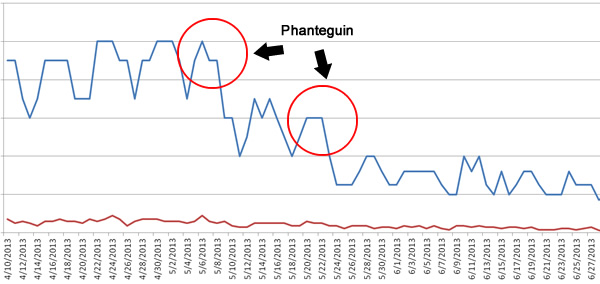

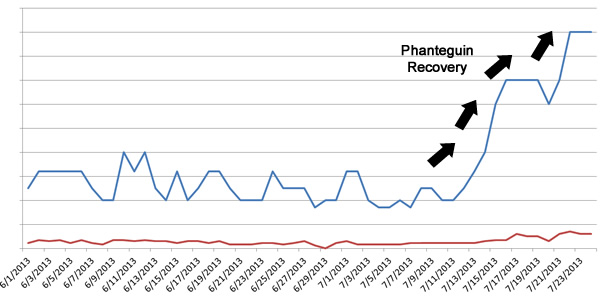

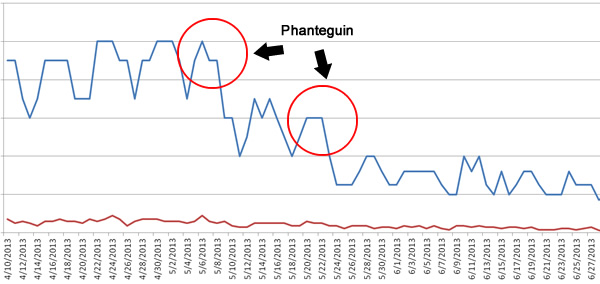

A client I’m helping was hit by Penguin 1.0 in 2012 and never recovered. Then they were impacted by Phantom on May 8, and hit even harder by Penguin 2.0 on May 22.

When you’ve been hit by Phanteguin, it means you have both a content problem and an unnatural links problem, so you have to fight a battle on two fronts.

This case, in particular, shows how hard work and determination can pay off when you are hit by an algorithm update (or multiple algo updates).

My client moved really fast to rectify many of the problems riddling the website. They attacked their unnatural links problem aggressively and began removing links quickly. It’s worth noting that the site’s link profile was in grave condition (and that’s saying something, considering I’ve analyzed more than 220 websites hit by Penguin).

From a content perspective (which is what Phantom attacked), they had a pretty serious duplicate content problem. Their content wasn’t thin, but the same content could be found across a number of other pages on the site (and even across domains).
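Exact duplicates like these are fairly easy to surface once you have the main content of each page. Below is a minimal Python sketch that normalizes each page’s text, hashes it, and groups URLs that share a hash. It also flags groups that span more than one domain. The `pages` input, which maps each URL to its extracted main content, is an assumption about what your crawler provides. The sketch only catches exact matches after normalization. Near-duplicates would need a fuzzier technique such as shingling, which this sketch does not implement.

```python
import hashlib
import re
from collections import defaultdict
from urllib.parse import urlparse


def fingerprint(text: str) -> str:
    """Hash of the text after lowercasing and dropping punctuation/extra whitespace."""
    normalized = " ".join(re.findall(r"\w+", text.lower()))
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def duplicate_groups(pages: dict[str, str]) -> list[list[str]]:
    """Group URLs whose main content is identical after normalization."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[fingerprint(text)].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


def is_cross_domain(group: list[str]) -> bool:
    """True if a duplicate group spans more than one hostname."""
    return len({urlparse(url).netloc for url in group}) > 1
```

Cross-domain groups are the ones worth prioritizing here, since the same content was showing up across company-owned sites as well as within the main site.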

In addition, there was a heavy cross-linking problem with company-owned domains (using exact match anchor text). That’s another common problem I’ve seen with both Phantom and Panda victims.
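One way to size up cross-linking like this is to break down a backlink export by source domain and anchor text. This Python sketch takes three inputs: a hypothetical list of `Link` records (source URL plus anchor text), a set of company-owned domains, and a set of target “money” terms. It reports how many links come from owned domains and what share of those use an exact-match anchor. It says nothing about how Google actually weighs these links. It’s only a way to measure the size of the problem.

```python
from collections import Counter
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class Link:
    source_url: str  # page the link appears on
    anchor: str      # visible anchor text


def host(url: str) -> str:
    """Lowercased hostname without a leading 'www.'."""
    return urlparse(url).netloc.lower().removeprefix("www.")


def owned_anchor_report(links: list[Link], owned_domains: set[str],
                        target_terms: set[str]) -> dict:
    """Summarize exact-match anchors on links coming from company-owned domains.

    owned_domains and target_terms are expected in lowercase, without 'www.'.
    """
    owned = [link for link in links if host(link.source_url) in owned_domains]
    exact = [link.anchor.strip().lower() for link in owned
             if link.anchor.strip().lower() in target_terms]
    return {
        "owned_links": len(owned),
        "exact_match_links": len(exact),
        "exact_match_share": len(exact) / len(owned) if owned else 0.0,
        "anchors": Counter(exact),
    }
```

A high exact-match share from owned domains is the pattern described above. Those links are candidates for removal, nofollowing, or rewording to natural anchors.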

A large percentage of the problematic content was dealt with quickly, which, again, is a testament to how determined the owner of the company was to fix the Phanteguin situation.

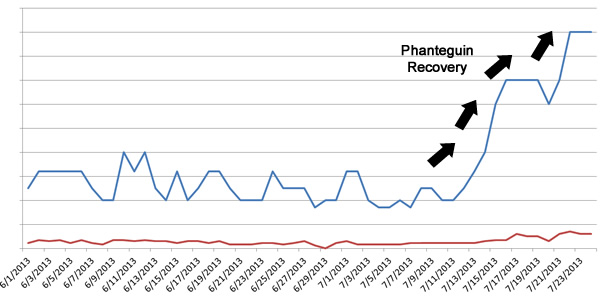

On July 15, the site began its recovery (and traffic has been increasing ever since). The site is now close to its pre-Phanteguin levels. And once again, the reason it’s not fully back is that it shouldn’t have been there in the first place. Remember, there was an unnatural link situation artificially boosting its power, including unnatural links from company-owned domains.

So, this case study shows that Penguin recoveries can occur outside of official Penguin updates, and it also shows that recovery from Phantom-based content issues is possible during Panda updates.

It’s important to understand that this is going on. Nobody outside Google knows exactly how the algorithm updates are connected, but I’ve now seen them connect several times (and now Phantom is involved).

What Didn’t Work

Now we’ve examined what worked. But what didn’t work for companies trying to recover?

July 15 wasn’t a great day for every website impacted by Panda, Phantom, or Penguin. Here’s why:

1. Lack of Execution

If you’ve been hit by an algorithm update, and don’t make significant changes, then there’s little chance of recovering (at least to the extent that you desire).

I know a number of companies that were reluctant to implement major changes, including gutting content, refining their structure, changing their business model, and tackling unnatural links. They didn’t recover, and it makes complete sense to me that they didn’t.

There are tough decisions to make when you’ve been hit by Panda, Phantom, or Penguin. If you don’t have the intestinal fortitude to take action, then you probably won’t recover. I saw several cases of this during the July 15 update.

2. Rolling Back Changes and Spinning Wheels

I know a few companies that actually implemented the right changes, but ended up rolling them back after having second thoughts. This is one of the worst things you can do.

If you roll out changes, but don’t wait for another update, then you will have no idea if those changes worked. Worse, you are sending really bad signals to the engines as you change your structure, content, internal linking, etc., only to roll it back a few weeks later.

You must make hard decisions and stick with them in order to gain traction. Do the right things SEO-wise and solidify your foundation. That’s the right way to go.

3. Caught in the Gray Area

I’ve written about the gray area of Panda before, and it’s a tough spot to be in. If you don’t change enough of your content, or remove enough links, then you can sit in algorithm update limbo for what seems like an eternity.

You should fully understand the algorithm update(s) that hit you, understand the risks and threats with your own site, and then execute changes quickly and accurately. This is why I’m very aggressive when dealing with Panda, Phantom, and Penguin. I’d rather have a client recover as quickly as possible and then build on that foundation (versus getting caught in the gray area).

Key Takeaways

If you’re still dealing with the impact of an algorithm update (whether Panda, Phantom, or Penguin, or some combination of the three), hopefully these takeaways will help you get on the right path.

- Have a clear understanding of what you need to tackle. Don’t be afraid to make hard decisions.

- Move fast. Speed, while maintaining focus, is key to successfully recovering from an update.

- Clean up everything you can, including both content and link issues. Don’t just focus on one element of your site, when you know there are problems elsewhere. Remember, one algorithm update may bubble up to another, so don’t get caught in a silo.

- Stick with your changes. Don’t roll them back too soon. Have the intestinal fortitude to stay the course.

- Build upon your clean (and stronger) foundation with the right SEO strategy. Don’t be tempted to test the algorithm again. It’s not worth it.

Summary: Analyze, Plan, and Execute

Like many other things in this world, success often comes down to execution. I’ve had the opportunity to speak with many business owners who have been impacted by Panda, Phantom, and Penguin. I’ve learned that it’s one thing to say you’re ready to make serious changes, and another to execute those changes.

To me, it’s critically important to have a solid plan, based on thorough analysis, and then be able to execute with speed and accuracy. Working toward recovery is hard work, but that hard work can pay off.
