It isn’t uncommon to hear that Google has made a mistake. It is, however, rare to discover that buried deep within Google’s best practices documentation (for mobile), a potentially explosive burden lies dormant. One that can negatively affect page load speed, bounce rates, and potentially rankings.

The Mistake

This issue is applicable to any site using a Content Delivery Network (CDN) like Akamai, and also serving special HTML or CSS for mobile depending on the user-agent of the device requesting the page. When a site serves special content on the same desktop URL, Google says it:

“…strongly recommends that your server also send the Vary (User-Agent) HTTP header on URLs that serve automatic redirects. This helps with ISP (Internet Service Provider) caching and is another signal for Googlebot and our algorithms to discover and understand your website’s configuration.”
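To make the recommendation concrete, here is a minimal sketch of a server that serves different HTML on the same URL and sends the Vary header along with it. It is written as a plain WSGI app in Python; the `is_mobile` check and the two HTML snippets are hypothetical stand-ins, not how Google or any particular site detects devices.

```python
# Hypothetical markup for the two variants; a real site would render templates.
DESKTOP_HTML = b"<html><body>Desktop layout</body></html>"
MOBILE_HTML = b"<html><body>Mobile layout</body></html>"


def is_mobile(user_agent):
    """Very rough check, for illustration only; real detection is more involved."""
    ua = user_agent.lower()
    return "mobile" in ua or "iphone" in ua or "android" in ua


def app(environ, start_response):
    """WSGI app that serves different HTML on the same URL, by user-agent."""
    user_agent = environ.get("HTTP_USER_AGENT", "")
    body = MOBILE_HTML if is_mobile(user_agent) else DESKTOP_HTML
    headers = [
        ("Content-Type", "text/html; charset=utf-8"),
        ("Content-Length", str(len(body))),
        # Tells caches that the response depends on the request's User-Agent.
        ("Vary", "User-Agent"),
    ]
    start_response("200 OK", headers)
    return [body]
```

To try it locally, the standard library’s `wsgiref.simple_server.make_server("", 8000, app).serve_forever()` will serve it on port 8000.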

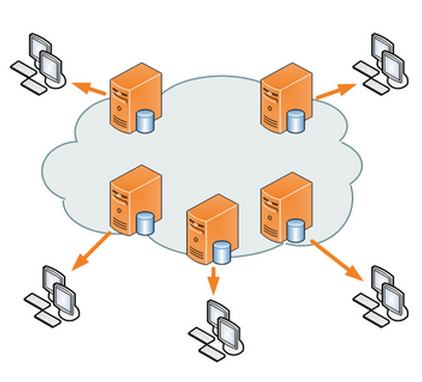

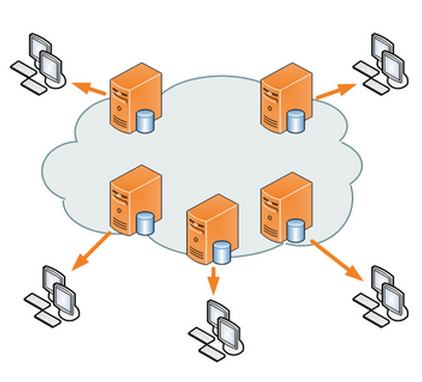

What Google didn’t take into consideration is the role of the CDN in the equation. Most websites depend on a CDN because it can serve content faster by keeping cached copies of a website on high-performance servers across the country (or world).

As it turns out, implementing the Vary: User-Agent HTTP header effectively turns off the caching of pages on the CDN. This means the CDN would be worthless and a surplus of requests would begin hitting the site owner’s origin server(s).

Unplanned stress on servers can lead to slower page load speeds, poor user behavior metrics, and could even affect rankings.

Finger Pointing

While Google is to blame for not taking this into consideration in its recommendation, and for not acting on the feedback it has been given, it isn’t entirely Google’s fault. There are a couple of tough factors at play here.

- The user-agent string mess. There are so many user-agents that asking a CDN to store a special version of a page for each one might be too much of a burden. The question should be asked: couldn’t CDNs limit the stored copies to only the user-agents that matter? That seems like functionality that needs to be built on the CDN side of things.

- According to Akamai, storing multiple copies of each page could result in the wrong version of a page being sent to a user. This includes sending a page in the wrong language, a risk Akamai is apparently not willing to take. Yet again, this seems like missing functionality that a CDN should take care of.
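The first point can be illustrated with a toy simulation. It is a sketch under stated assumptions, not a model of how Akamai or any specific CDN works: it assumes a cache that uses the user-agent as part of its cache key, and compares keying on the raw string with keying on a coarse device bucket. The `device_class` function, the bucket names, and the made-up `Browser/N` user-agents are all hypothetical.

```python
def device_class(user_agent):
    """Collapse a raw user-agent string into a coarse, hypothetical bucket."""
    ua = user_agent.lower()
    if "mobile" in ua or "iphone" in ua or "android" in ua:
        return "mobile"
    return "desktop"


def origin_fetches(requests, key_fn):
    """Count cache misses (origin fetches) for a given cache-key function."""
    cached = set()
    misses = 0
    for url, user_agent in requests:
        key = key_fn(url, user_agent)
        if key not in cached:
            cached.add(key)
            misses += 1
    return misses


# 1,000 requests for one page from 200 made-up user-agent strings,
# half of them containing the token "Mobile".
requests = [
    ("/home", "Browser/%d%s" % (i % 200, " Mobile" if i % 2 else ""))
    for i in range(1000)
]

raw_key = origin_fetches(requests, lambda url, ua: (url, ua))
bucketed = origin_fetches(requests, lambda url, ua: (url, device_class(ua)))

print(raw_key)   # 200
print(bucketed)  # 2
```

Keyed on the raw string, the cache goes back to the origin once per distinct user-agent; keyed on the bucket, it stores only two copies of the page. The trade-off is that the bucketing logic has to agree with whatever the origin server uses to pick a version, otherwise the wrong-version risk from the second point comes back.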

While these two issues make sense, it does seem that a solution could be reached if the right people were working together on both sides.

This entire problem might be another indication of mobile growing pains. Google wants another signal to indicate when it should try to find mobile content and CDNs don’t want the extra burden of caching additional copies of content.

The Solution

If you’re dynamically serving different HTML on the same URL based on user-agent, ask your CDN how it handles the Vary: User-Agent HTTP header. Most likely the CDN will say it doesn’t handle it at all, and knowing that in advance lets you prevent this issue. Perhaps you can pioneer the fix!

Meanwhile, if Google’s already crawling your content with Googlebot-Mobile, it’s already aware of your configuration. See if Google is crawling your mobile content by checking log files for Googlebot-Mobile. It can be found within a user-agent string like the one below:

Mozilla/5.0 (iPhone; U; CPU iPhone OS 4_1 like Mac OS X; en-us) AppleWebKit/532.9 (KHTML, like Gecko) Version/4.0.5 Mobile/8B117 Safari/6531.22.7 (compatible; Googlebot-Mobile/2.1; +http://www.google.com/bot.html)
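If you’d rather not search the logs by hand, a short script can do the check. The sketch below assumes your server writes the common “combined” access log format, where the user-agent is the last double-quoted field on each line; adjust the pattern if your format differs. The sample log lines, including the IP addresses and timestamps, are made up for illustration.

```python
import re

# Assumes the user-agent is the last double-quoted field on each line,
# as in the common "combined" access log format.
LAST_QUOTED_FIELD = re.compile(r'"([^"]*)"\s*$')


def googlebot_mobile_hits(lines):
    """Return the log lines whose user-agent mentions Googlebot-Mobile."""
    hits = []
    for line in lines:
        match = LAST_QUOTED_FIELD.search(line)
        if match and "Googlebot-Mobile" in match.group(1):
            hits.append(line)
    return hits


# Made-up sample lines; only the first comes from Googlebot-Mobile.
sample = [
    '192.0.2.1 - - [01/Jan/2014:00:00:00 +0000] "GET / HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (iPhone; U; CPU iPhone OS 4_1 like Mac OS X; en-us) '
    'AppleWebKit/532.9 (KHTML, like Gecko) Version/4.0.5 Mobile/8B117 '
    'Safari/6531.22.7 (compatible; Googlebot-Mobile/2.1; '
    '+http://www.google.com/bot.html)"',
    '192.0.2.2 - - [01/Jan/2014:00:00:05 +0000] "GET / HTTP/1.1" 200 2048 "-" '
    '"Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko)"',
]

print(len(googlebot_mobile_hits(sample)))  # 1
```

To run it against a real file, pass the open file object instead of `sample`, for example `googlebot_mobile_hits(open("access.log"))`, where `access.log` is a placeholder for your own log path.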
