You know you’ve hit the big time when you end up in a feature article in Wired. That’s what happened when Brian Christian wrote about the A/B test, the technology behind some of the biggest e-commerce sites online.

A/B testing is hitting the mainstream because it is so effective. And with so many tools available it has become very easy and very inexpensive to run.

But talk to most marketers and they only have a basic understanding of the art of A/B testing. They haven’t quite figured out how to take it to the next level.

That’s what this article is all about: helping you become a badass A/B tester with the following 23 tips I’ve picked up in my experience in the field.

Let’s go.

1. Test Your Software

Not all A/B testing software is the same. For example, Neil Patel said that he would run A/B tests where there were swings as high as 40 percent. That’s a significant difference that might lead you to believe you are on to a winner. But Neil discovered that the revenue wasn’t changing based on the increased conversion, so he suspected that there was something wrong with the software.

How do you tell if software is accurate? Run an A/A test.

In other words, test the control against the control. If you see a significant difference in results, something is likely wrong with your software or how it is set up.
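What counts as a "significant difference" in an A/A test? One common way to check is a two-proportion z-test. The sketch below is only an illustration: the function name and the visitor and conversion counts are made up, and your testing tool may use a different statistical method.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a, visitors_a, conv_b, visitors_b):
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a = conv_a / visitors_a
    rate_b = conv_b / visitors_b
    # Pooled rate, assuming both groups really convert at the same rate.
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if std_err == 0:
        return 1.0  # No conversions (or all conversions) in both groups.
    z = (rate_a - rate_b) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical A/A numbers: the same page shown to both groups.
p_value = two_proportion_p_value(conv_a=200, visitors_a=10_000,
                                 conv_b=215, visitors_b=10_000)
print(f"p-value: {p_value:.3f}")
```

A small p-value (below 0.05 is a common cutoff) on an A/A test is a warning sign. Keep in mind that about one A/A test in twenty will cross that line by pure chance, so rerun it before blaming the software.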

2. Minimize Friction

Friction is any element on your landing page that slows down conversion. It’s the staple for good A/B testing—the thing that you must look for immediately. Here’s how we do it at Treehouse:

What should you look for? Here are classic elements that cause friction:

- Form Fields – Forms should be simple to fill out. The more information you request the slower the process. Make sure you can justify every piece of information you are asking for.

- Process Steps – The more steps the slower the process. Like forms, every step should justify its existence. If you can eliminate it, eliminate it.

- Page Length – A long page may not cause friction since you provide all of the essential information below the fold. Even then you should always test page length.

Any element of friction will pull down your conversion rates. Tweak the obvious and then A/B test. But as you’ll see you should even test anything that becomes conventional wisdom. I’ll explain in a few minutes.

3. Information-Entering Anxiety

While this is hard to test for, you can usually spot where this is a problem by a pattern in abandonment at a certain step in the process.

Anxiety is created when people aren’t sure if they are going to be rewarded for all of their work. If they suspect that the process steps aren’t useful, they may bail. You see this happen a lot on pages that request a lot of information in exchange for something of low value (an email newsletter, for example). Surveys also cause anxiety. The longer the survey, the more dropouts you will have.

However, if people feel like they are going down the right path, it doesn’t matter how many steps there are in the process. As long as they are following the right scent they will be happy to click away and fill out forms.

4. Clarity Trumps Persuasion

This might upset some sales copywriters, but persuasion isn’t always what works best. Sometimes it’s nothing more than being insanely clear. This means that you can avoid a lot of the language of influence by answering these three questions:

- Where am I? Make it clear where your visitor is in the sales process.

- What can I do here? Make it clear what you want the visitor to do. One page, one goal.

- Why should I do it? The value proposition should be obvious. Your visitor shouldn’t have to guess what he should do—neither should he guess why. Make it clear.

5. Interview for Insights

An A/B badass knows that one of his first jobs is to sit down with the client and the ideal customer and listen to what they have to say. It’s not all about software.

This is the best way to collect facts and feedback. It’s also hard to go wrong when you observe behavior and see how customers walk through your landing page. After that, pull them aside and ask customers why they did what they did. That action will lead to some deep insights.

6. Learn from Pricing Takeaways

Nailing down an acceptable price is probably one of the hardest things to figure out. This is why you need to build hypotheses with real data, and then test those hypotheses with an A/B test (don’t forget to run an A/A test to calibrate your software).

But when testing price you have to understand that it’s not all about the numerals. Customers care more about the value that you provide behind that price—so tinker with your sales copy, the bullets and ask yourself if you are showing great value.

It’s more important to base price on what your customer thinks it is worth and not how much it cost to create and operate.

7. Learn from Higher Prices

Never assume that lower prices will raise sales. Nor should you assume that raising prices will drop sales. You may have your prices too low for the value you are providing so customers walk away thinking that you are hyping up a poor product. If you raise prices to match the value, then you might see sales jump with higher prices.

Work in small increments and batches. For example, start with a 2 percent increase in price against the control, document the change and then try another 2 percent on top of that. Small incremental changes will keep your customers from feeling like you are overcharging.
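When you compare a price increase against the control, conversion rate alone can mislead you, because each sale is now worth more. One way to read the result is revenue per visitor. Here is a minimal sketch; the prices, conversion counts, and function name are all hypothetical.

```python
def revenue_per_visitor(price, conversions, visitors):
    """Revenue earned per visitor who saw this price."""
    return price * conversions / visitors


# Hypothetical test: control price versus a 2 percent increase.
control_price = 50.00
variant_price = round(control_price * 1.02, 2)

control_rpv = revenue_per_visitor(control_price, conversions=300, visitors=10_000)
variant_rpv = revenue_per_visitor(variant_price, conversions=297, visitors=10_000)

print(f"control: ${control_rpv:.4f} per visitor")
print(f"variant: ${variant_rpv:.4f} per visitor")
```

In this made-up case the higher price converts slightly fewer visitors but still earns more per visitor. Whether a gap like that is real or just noise is a separate question that needs enough traffic to answer.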

8. Test Social Features

I see a lot of social share buttons on product pages. I’m not sure why they are there—did the company test to see if they raise or suppress sales?

An interesting study done by Empirica Research found that like and tweet buttons suppressed sales on a Clearasil skin product page by up to 25 percent. Some products are just too sensitive to think about sharing, so that’s a huge decrease in sales from just adding a like or tweet button.

Appropriately Amazon puts social buttons after the order:

And you can bet they tested that.

9. Lower Your AdWords Position

Common sense might tell you that the higher your AdWords position the more traffic you will drive to your landing page. You’ll hear me say this more than once, but all A/B testing badasses know that you should always test common sense.

Besides, the difference between position one and two, or two and three, could be only half a percentage point, but a huge divide might exist between the bid prices for those positions. In other words, you might get half a percent less traffic in a lower position while your cost drops by more than 50 percent. Calculate your ROI and you’ll see which one is more effective. Moral of the story: test all of your assumptions.
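To make the position-versus-cost math concrete, here is a rough sketch of the ROI comparison. Every number in it (clicks, cost per click, conversion rate, value per conversion) is invented for illustration, and the function is not part of any AdWords tool. Plug in your own figures.

```python
def ad_roi(clicks, cost_per_click, conversion_rate, value_per_conversion):
    """Return on ad spend, computed as (revenue - cost) / cost."""
    cost = clicks * cost_per_click
    revenue = clicks * conversion_rate * value_per_conversion
    return (revenue - cost) / cost


# Hypothetical: position 2 gets half a percent fewer clicks
# but each click costs less than half as much.
position_1 = ad_roi(clicks=1000, cost_per_click=2.00,
                    conversion_rate=0.03, value_per_conversion=80)
position_2 = ad_roi(clicks=995, cost_per_click=0.95,
                    conversion_rate=0.03, value_per_conversion=80)

print(f"position 1 ROI: {position_1:.0%}")
print(f"position 2 ROI: {position_2:.0%}")
```

The sketch assumes the conversion rate is the same in both positions. That is itself an assumption worth testing.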

10. Test All Best Practices

Best practices arise because enough tests have been performed that have returned similar results. Some of the more common A/B testing dogma you’ll hear is that headline copy should match your text ad copy or endorsements will raise your trust levels for the landing page.

While those standards are true in most cases you should always analyze your elements. Sure, use best practices when you don’t have the time. When you have the time, however, or when exceptional results are critical, take the time to test all elements—even if you think you know the answer.

11. Employ Small Tests When Under the Gun

When you don’t have time to be comprehensive you need to isolate effective changes with small tests. For instance, using software like Silverback can allow you to record a person using an ecommerce site and get useful data within five minutes. I’ve seen short tests like that result in cart conversion increases of up to 13 percent. Think about it: five minutes of work for a 13 percent increase. That happens when you solve real customer problems.

12. Don’t Ignore the Conversion Trinity

A/B testing badasses know that they can do some serious CRO improvement when they enhance one or all of the elements in what are called the Conversion Trinity:

- Relevance: Is your landing page relevant to the visitor’s wants or needs? Are you maintaining the scent? Are you being consistent?

- Value: Is your value proposition answering all of the meaningful questions that the visitor is asking? Will your solution provide them with the right benefits?

- Call to action: Is it clear what the visitor needs to do next? Can they perform that action with confidence—or are they confused?

This is all about customer fit.

13. Test the Message

While making micro site changes can improve conversion you shouldn’t ignore macro issues. For example, testing the difference between “Join Now” versus “Buy Now” is a good idea, but you can also see dramatic changes in conversion by testing the page’s entire message.

Is the message from the headline to the first sentence to the call-to-action consistent? Do the designs and images reinforce the message?

Sometimes it’s healthy when A/B testing to step back from the trees and look at the forest. You might be surprised by the insights that you find.

14. Design with One Page and One Goal

Every A/B testing badass will tell you that the best conversion advice you can ever give is to focus on one goal per page. And that doesn’t take any complicated analytic software to figure out. It should be plain at a quick glance.

15. Test Appeals in Your Headline

Knowing your customer is commandment number one when it comes to testing. And knowing what appeals to them is essential. But often when you ask them they won’t be able to tell you—that is until they actually see it.

What do I mean by appeal? I mean benefits that resonate with them. Often products provide numerous solutions to a client—but only the biggest and best should be presented in the headline. The problem is that we often think we know what benefit is the biggest and best—and we are often wrong.

You can bet that Optimizely tested headline appeals. Here’s their current favorite:

Make a list of every single benefit your product provides. Then test those in the headline. Stick with the winner.

16. Test Insanely Small Elements

AJ Kohn tells a story of how, back in 2007, he tested the difference between using initial capitals in the URL of a Google AdWords test versus not using them: www.YourDomain.com versus www.yourdomain.com.

The results surprised him: the URL with the capitals beat the all-lowercase URL by 53 percent. That’s huge.

Now Google doesn’t allow that sort of customization but it goes to show that big improvements in conversion can be achieved in seemingly small changes.

17. Violate the Sacred

Want to see some unexpected and big changes? Then test things that you know should not be tested.

Let’s say you have a tightly optimized page. Most people would dust their hands of testing thinking they’ve nailed it. But you might be surprised at how much more conversion you can squeeze out of a page by taking a list of the best practices—things that should never be violated—and violate them.

18. Don’t Be Perfect

Often conversion experts think they have to produce perfect landing pages. But that’s not the goal of A/B testing. Conversion is built on making things better than they were before—not perfect.

For example, I’ve seen tests where clients had ugly landing pages and simply created a new page with a new design and a clear call-to-action. It was not a perfect page by any stretch of the imagination, just a page that was better than the old one, and profit jumped by 75 percent.

The trick is now to go back to that page and test some more. In other words, create a minimum viable landing page that is better than the old one, and start testing from there.

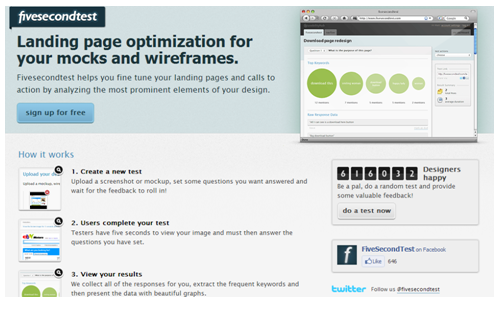

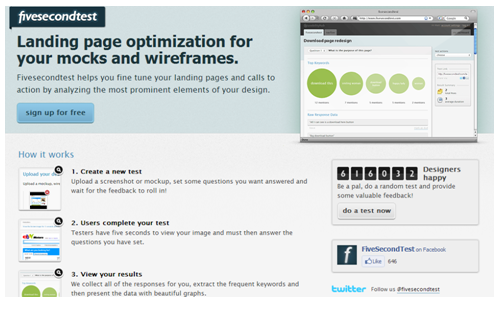

19. Look for Cost-Effective Ways to Test

Slick A/B testers aren’t all about using the latest software technology or employing sophisticated testing methods. It’s often just a matter of finding improvements through paper prototyping or running a 5-second test of a wireframe.

You don’t need a polished product to test nor do you need to always use complex technology. Never discount tests that are cheap or simple. Better yet, perform these cheap and simple tests, then validate the results in the web browser.

20. Let a Client Contradict A/B Testing

Successful A/B testing is a balance between proven optimization techniques and information you get from clients. It’s really all about testing assumptions—your client’s and yours.

I remember one instance where there was doubt that trimming excessive content would improve the landing page conversion rate. While the client admitted to not having tested this assumption, they shared plenty of anecdotal evidence that their approach was working.

We came in to test using our conversion techniques, testing usability against excess content. To our surprise the client was right: excess content pushed the conversion rate higher. Our next step was to figure out why that was the case.

21. Wait Until the Test Is Over

If there is any one single lesson to learn from A/B testing it has to be this: it’s hard to predict results. Even when using our proven assumptions we are often surprised by the results.

One time I was working on a nine-month project where we churned out over 200 pieces of content. I thought I got pretty good at predicting what headlines would work, but I was right probably about half the time, meaning it was no better than a coin flip. Part of the problem was that I was going off early information. I wasn’t waiting until more results came in.
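How long is long enough? One common approach is to estimate, before the test starts, how many visitors each variant needs, and not call a winner until you get there. The sketch below uses a standard normal-approximation formula for comparing two conversion rates. The function name, the 5 percent significance level, the 80 percent power, and the example rates are assumptions for illustration, not figures from the project above.

```python
from math import ceil
from statistics import NormalDist


def visitors_needed_per_variant(baseline_rate, expected_rate,
                                alpha=0.05, power=0.80):
    """Approximate visitors per variant needed to detect the given lift."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_power = NormalDist().inv_cdf(power)
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)


# Hypothetical: a 2 percent baseline, hoping to detect a lift to 2.5 percent.
needed = visitors_needed_per_variant(baseline_rate=0.02, expected_rate=0.025)
print(f"visitors needed per variant: {needed}")
```

The smaller the lift you are trying to detect, the more visitors you need. That is exactly why early numbers are so unreliable.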

22. Never Trap a User

This falls in the category of best practices, but it’s so important I wanted to isolate it in its own section. Common sense with landing page conversion would say to remove all escape routes on that page. This would mean limiting the links and eliminating navigation.

You can bet Copyblogger Media tested navigation versus no navigation. See which one won?

Unfortunately I’ve seen in too many tests that when you block off all exits people simply abandon the page. When visitors feel trapped they get nervous and bail. Again, this is something that you should test and not simply affirm because it is a best practice.

23. Always Be Testing

Finally, A/B testing badasses won’t accept the status quo. They’ll challenge every fundamental by testing it because they know that just because something has worked over 60 percent of the time on 70 percent of the sites doesn’t mean that it will work on their site. They treat every site or page as unique and test the best standards.

And even when they’ve established controls they will eventually get around to re-testing those controls. They know that every single control must justify itself. There are no free lunches and no sacred cows.

Your Turn

Do you have any unusual tips that can make someone an A/B testing badass? If so, please share them in the comments.

Editor’s note: This column originally was published on November 15, 2012, and comes in at No. 6 on our countdown of the 10 most popular Search Engine Watch columns of 2012. As the clock ticks down to 2013, we’re celebrating the Best of 2012 by revisiting our most popular columns, as determined by our readers. Enjoy and keep checking back!
