There are plenty of reasons why you’d want Googlebot to recrawl your website ahead of schedule. Maybe you’ve cleaned up a malware attack that damaged your organic visibility and want a clean bill of health so rankings recover faster; or maybe you’ve implemented site-wide canonical tags to eliminate duplicate content and want these updates sorted out quickly; or you want to accelerate indexing for that brand new resources section on your site.

To force recrawls, SEOs typically resubmit XML sitemaps, use a free ping service like Seesmic Ping (formerly Ping.fm) or Ping-O-Matic to try to coax a crawl, or fire a bunch of social bookmarking links at the site. Trouble is, these tactics are pretty much hit or miss.

Good news is, there’s a better, more reliable way to get Googlebot to recrawl your site ahead of your standard crawl rate, and it’s 100 percent Google-endorsed.

Meet “Submit URL to Index”

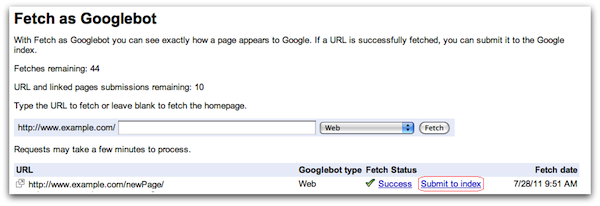

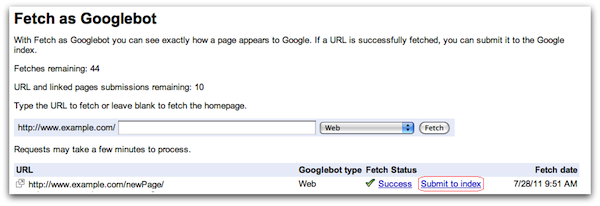

Last year, Google updated “Fetch as Googlebot” in Webmaster Tools (WMT) with a new feature, called “Submit URL to Index,” which allows you to submit new and updated URLs that Google themselves say they “will usually crawl within a day.”

For some reason, this addition to WMT got very little fanfare in the SEO sphere, and it should have been a much bigger deal than it was. Search marketers should know that Submit URL to Index works as advertised: it's very effective at forcing a Google recrawl and yields almost immediate indexing results.

Quick Case Study and Some Tips on Using “Submit URL to Index”

Recently, a client started receiving a series of notifications from Webmaster Tools about a big spike in crawl errors, including 403 errors and robots.txt file errors. These preventive alerts from WMT are relatively new and part of Google’s continued campaign to give site owners more visibility into their site’s performance (and help them diagnose performance issues), which started with the revamping of the crawl errors feature back in March.

Almost immediately, organic traffic and SERP performance began to suffer for the client site, which is to be expected given the number of error notices (five in three days) and the rash of errors cropping up.

Here’s the final email sent to the client from WMT:

Google being unable to access the site was the real tip off here, and it turned out that the client’s developer had inadvertently blocked Google’s IP.
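For developers who want to avoid this kind of accidental block, here's a minimal sketch of how you might check whether an IP really belongs to Googlebot before adding it to a deny list. It follows the reverse-then-forward DNS check that Google describes for verifying its crawler. The function name `is_googlebot_ip` and the injectable lookup parameters are my own assumptions for illustration, and the forward lookup used here only handles IPv4.

```python
import socket

# Hostname suffixes Google documents for its crawlers (leading dot matters,
# so "notgooglebot.com" does not match).
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")


def is_googlebot_ip(ip, reverse_lookup=None, forward_lookup=None):
    """Return True if `ip` reverse-resolves to a Google hostname that
    forward-resolves back to the same IP.

    The lookup parameters are hypothetical hooks so the check can be
    exercised without touching the network.
    """
    if reverse_lookup is None:
        reverse_lookup = lambda addr: socket.gethostbyaddr(addr)[0]
    if forward_lookup is None:
        # gethostbyname_ex returns (hostname, aliases, ip_list); IPv4 only.
        forward_lookup = lambda host: socket.gethostbyname_ex(host)[2]

    try:
        host = reverse_lookup(ip).lower().rstrip(".")
        if not host.endswith(GOOGLE_SUFFIXES):
            return False
        # Forward-confirm: the hostname must map back to the original IP.
        return ip in forward_lookup(host)
    except OSError:
        # Covers socket.herror and socket.gaierror (failed lookups).
        return False
```

Calling `is_googlebot_ip(some_ip)` with no extra arguments performs real DNS lookups, so treat a `False` result as "could not confirm" rather than proof of a fake crawler.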

In the past, technical issues like the one above might take days to discover. But these new crawl error notifications are a real godsend. They saved us a ton of time and effort trying to isolate and diagnose the problem, and they greatly reduce the time it takes to solve issues like this. This means we spend less time fighting fires and more time on progressive SEO efforts.

After the developer removed the block on Google’s IP and we felt the issue was solved, we wanted to force a recrawl. To do this, you first need to submit URLs using the “Fetch as Googlebot” feature, which gives you diagnostic feedback on whether Google succeeded or hit an error when attempting to fetch each URL.

If Google is able to fetch the URL successfully, you’re then granted access to use the “Submit URL to Index” feature.
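Since robots.txt errors were part of the problem here, it can be worth confirming locally that your robots.txt doesn't shut Googlebot out of the URL before you go fetch it in WMT. Here's a rough sketch using Python's standard `urllib.robotparser`. The helper name `googlebot_allowed` and the example URLs are assumptions for illustration, and Python's parser may not interpret every rule exactly the way Google does, so treat it as a sanity check only.

```python
from urllib.robotparser import RobotFileParser


def googlebot_allowed(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt text allows `user_agent` to fetch `url`.

    `robots_txt` is the file's contents as a string, so this works on a
    copy you've already downloaded or on a draft you haven't deployed yet.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt contents for illustration.
example_rules = """\
User-agent: *
Disallow: /private/
"""

print(googlebot_allowed(example_rules, "https://example.com/resources/"))
print(googlebot_allowed(example_rules, "https://example.com/private/report.html"))
```

This only tells you what robots.txt says. It won't catch a server-level block like the IP ban in this case, which is why the Fetch as Googlebot result is still the real test.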

Here are a couple of tips when using this feature:

- Select “URL and all linked pages” vs “URL” when submitting for a recrawl. This designates the URL you submit as the starting point for a crawl and includes a recrawl of all internal links on that page and whole interlinked sections of sites.

- You can also force Google to crawl URLs that aren’t in your error reports by going to “Fetch as Googlebot” and plugging in any URL on your site. FYI you can leave the field blank if you want Google to use the home page as a starting point for a recrawl.

- When choosing additional URLs to crawl, submit pages that house the most internal links so you’re “stacking the deck” in trying to force as deep a crawl as possible on as many URLs as possible: think HTML site map and other heavily linked-up pages.

Keep in mind that Google limits you to ten index submissions per month, and that’s per account. So if you host a number of client sites in the same WMT account, be aware and use your submits sparingly.
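With so few submissions to work with, it helps to know which pages actually carry the most internal links. Below is a small sketch that counts the unique internal links on each page and ranks the candidates. It assumes you already have each page's HTML on hand (for example, from your own crawl), and the names `count_internal_links` and `rank_hub_pages` are mine, purely for illustration.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class _LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag it sees."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def count_internal_links(page_url, html):
    """Count unique links on the page that point to the same host as page_url."""
    collector = _LinkCollector()
    collector.feed(html)
    site = urlparse(page_url).netloc.lower()
    internal = set()
    for href in collector.hrefs:
        parts = urlparse(urljoin(page_url, href))  # resolve relative links
        if parts.scheme in ("http", "https") and parts.netloc.lower() == site:
            # Drop #fragments so /page and /page#top count once.
            internal.add(parts._replace(fragment="").geturl())
    return len(internal)


def rank_hub_pages(pages, limit=10):
    """Given {url: html}, return up to `limit` URLs, most internal links first."""
    scored = [(count_internal_links(url, html), url) for url, html in pages.items()]
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [url for _, url in scored[:limit]]
```

Feed it a dictionary of your candidate pages and submit from the top of the list. The default `limit=10` simply mirrors the monthly cap mentioned above.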

After forcing your recrawls, return to the crawl errors screen and select the offending category (in this case it was the access denied tab). Then select the URLs, either individually or all at once, and click “mark as fixed.”

Now it’s worth noting that there may be a system lag with some of these notices. So even after you’ve made fixes, you may still get technical error notices. But if you’re confident you’ve solved all the issues, just repeat the process of marking URLs as fixed until you get a clean bill of health.

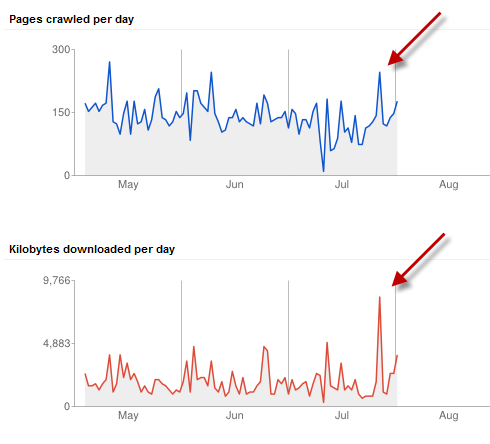

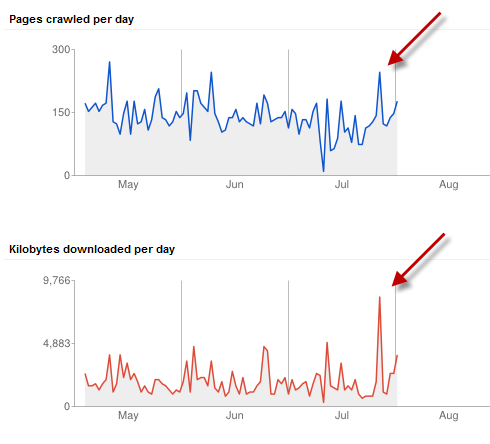

The day after forcing a Google recrawl of the client’s site, we saw an immediate spike in crawl activity in Webmaster Tools.

As a result, we were able to solve the issue in a few days and traffic rebounded almost immediately. I also believe that submitting multiple “internal link hub” type URLs for Google to crawl — including the HTML site map and an extensively linked-up resources page — really helped speed up recovery time.

Final Thoughts on Submit to Index and Crawl Error Alerts

All of these feature upgrades in Webmaster Tools — like the crawl error alert notifications — are really instrumental in helping SEOs and site owners find and fix technical issues faster.

With Submit to Index, you no longer have to wait around for Googlebot to crawl your site and discover your fixes. You can resolve technical issues faster, leading to less SERP interruption and happier clients.
