It all starts with Google, doesn’t it? Not really – it’s all about Google today because Google is the most used search engine.

Google, like any other software, evolves and corrects its own bugs and conceptual failures. The goal of the engineers working at Google is to constantly improve its search algorithm, but that’s no easy job.

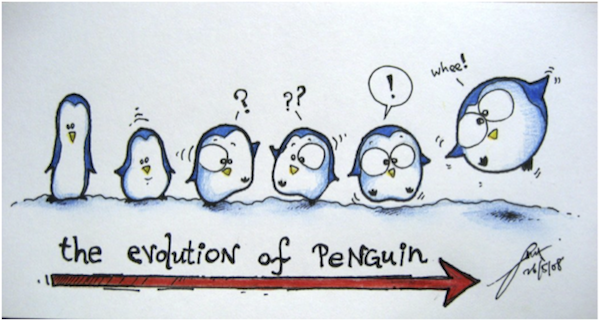

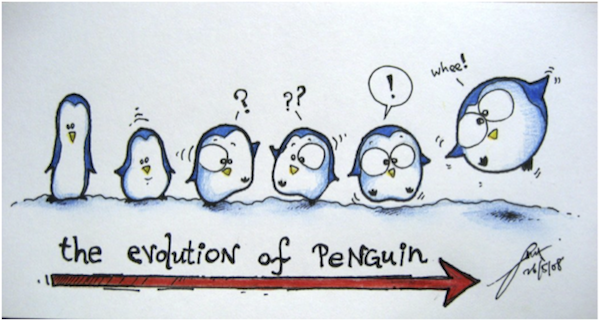

Google is a great search engine, but Google is still a teenager.

This article was inspired by my high expectations of the Google algorithm, which have been dashed over the last year as I watched how Google’s search results “evolved.” If we look at some of the most competitive terms in Google, we see search results filled with spam and hacked domains ranking in the top 10.

Why Can Google Still Not Catch Up With the Spammers?

Panda, Penguin, and the EMD update did clear some of the clutter. All of these highly competitive terms have been repeatedly abused for years. I don’t think there was ever a time when these results were clean, in terms of what Google might expect from its own search engine.

Even weirder is that the techniques used to rank this spam are as old as (if not older than) Google itself. And this brings me to a question: why can Google still not catch up with the spammers?

The only difference between now and then is the period of time a spam result will “survive” in the SERPs. Now it’s decreased from weeks to days, or even hours in some cases.

One of the side effects of Google’s various updates is a new business model: ranking high on high revenue-generating keywords for a short amount of time. For those people involved in this practice, it scales very well.

How Google Ranks Sites Today: A Quick Overview

These are two of the main ranking signal categories:

- On-page factors.

- Off-page factors.

On-page and off-page have existed since the beginning of the search engine era. Now let’s take a deeper look at the most important factors that Google might use.

Regarding the on-page factors, Google will try to understand and rate the following:

- How often a site is updated. Infrequent updates do not mean a site is low quality. Update frequency just tells Google how often it should crawl the site, and Google will compare it to the update frequency of other sites in the same niche to determine a trend and pattern.

- If the content is unique (duplicate content matching applies a negative score).

- If the content is of interest to users (bounce rate and traffic data, which mix on-page with off-page signals).

- If the site is linking out to a bad neighborhood.

- If the site is inter-linked with high-quality sites in the same niche.

- If the site is over-optimized from an SEO point of view.

- Various other, smaller on-page factors.
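To make the uniqueness factor more concrete, here is a minimal sketch of one common way to measure how similar two pages are: word shingles compared with Jaccard similarity. This is not Google's actual method, which is not public. The function names, the shingle size, the 0.8 threshold, and the -1.0 penalty are all assumptions made up for illustration.

```python
from __future__ import annotations


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping word n-grams (shingles) in the text."""
    words = text.lower().split()
    if len(words) < size:
        # Text shorter than one shingle: treat the whole text as a single shingle.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: str, b: str, size: int = 3) -> float:
    """Jaccard similarity of the two texts' shingle sets, from 0.0 to 1.0."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa and not sb:
        return 1.0  # two empty texts are trivially identical
    return len(sa & sb) / len(sa | sb)


def uniqueness_penalty(page: str, known_pages: list[str],
                       threshold: float = 0.8) -> float:
    """Hypothetical negative score: -1.0 if the page closely matches a known page."""
    closest = max((jaccard(page, other) for other in known_pages), default=0.0)
    return -1.0 if closest >= threshold else 0.0
```

A real search engine would need something far more scalable than pairwise comparison, but the idea of scoring a page down when it closely matches existing content is the same.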

The off-page factors are mainly links. Social signals are still in their infancy, and no study yet clearly shows that social signals correlate with rankings independently of the link signal. So far, it is all speculation.

Links can be classified into two broad categories:

- Natural. The link appeared as a result of:

  - The organic development of a page (meritocracy).

  - A “pure” advertising campaign with no intent of directly changing the SERPs.

- Unnatural. The link appeared:

  - With the purpose of influencing a search engine ranking.

Unfortunately, the unnatural links represent a very large percentage of what the web is today. This is mainly due to Google’s (and the other search engines’) ranking models. The entire web got polluted because of this concept.

When the unnatural links in your profile far outnumber the natural (organic) ones, it raises a red flag and Google starts watching your site more carefully.
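As a rough illustration of that red flag, the sketch below computes the share of unnatural links in a link profile and compares it to a cutoff. It assumes each link has already been labeled natural or unnatural, which is the genuinely hard part and is not shown here. The `Link` class, the function names, and the 0.7 threshold are hypothetical and are not taken from anything Google has published.

```python
from dataclasses import dataclass


@dataclass
class Link:
    source: str    # URL of the page that links to the site
    natural: bool  # assumed to be known; labeling links is the hard part


def unnatural_ratio(links: list[Link]) -> float:
    """Share of links in the profile labeled unnatural, from 0.0 to 1.0."""
    if not links:
        return 0.0
    return sum(1 for link in links if not link.natural) / len(links)


def raises_red_flag(links: list[Link], threshold: float = 0.7) -> bool:
    """Hypothetical rule: flag the site when unnatural links dominate the profile."""
    return unnatural_ratio(links) > threshold


profile = [
    Link("https://example.com/review", natural=True),
    Link("https://example.net/footer-1", natural=False),
    Link("https://example.net/footer-2", natural=False),
    Link("https://example.org/comment-spam", natural=False),
]
flagged = raises_red_flag(profile)  # 3 of 4 links are unnatural
```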

Google tries to fight unnatural link patterns with various algorithm updates. The best-known update targeting unnatural link patterns and low-quality links is Penguin; the EMD update, by contrast, targeted low-quality exact-match domains.

Google’s major focus today is on improving the way it handles link profiles. This is another difficult task, which is why Google is having a hard time keeping up with the various techniques that SEO pros (black hat or white hat) use to push a site’s natural ranking up or down.

Google’s Stunted Growth

Google is like a young teenager stuck on some difficult math problem. Google’s learning process apparently involves trying to solve the problem of web spam by applying the same process in a different pattern – why can’t Google just break the pattern and evolve?

Is Google only struggling to maintain an acceptable ranking formula? Will Google evolve, or will it stick with what it is doing now in a largely similar form?

Other search engines, like Blekko, have taken a different route and tried to crowdsource content curation. While this works well in a variety of niches, the big problem with Blekko is that this content curation is not very “mainstream,” which puts the burden of the algorithm on the shoulders of its own users. Still, pro users appreciate it, and their curation makes the Blekko results quite good.

In a perfect, non-biased scenario, Google’s ranked results should be:

- Ranked by non-biased ranking signals.

- Impossible to be affected by third parties (i.e., negative SEO or positive SEO).

- Able to tell the difference between bad and good (remember the JCPenney scandal).

- More diverse and impossible to manipulate.

- Giving new quality sites a chance to rank near the “giant” old sites.

- Maintaining transparency.

There is still a long way to go until Google’s technology evolves from the infancy we know today. Will we have to wait until Google is 18 or 21 years old – or even longer – before Google reaches this level of maturity that it dreams of?

Until then, the SEO community is left with testing and benchmarking the way Google evolves, and maybe trying to create a book of best practices for search engine optimization.

Google created an entire ecosystem that started backfiring a long time ago. It basically opened the door to all the spam concepts that it is fighting today.

Is this illegal or immoral, white or black? Who are we to decide? We are no regulatory entity!

Conclusion

Google is a complicated “piece” of software that is being updated constantly, with each update theoretically bringing new fixes and improvements.

None of us were born smart, but we have learned how to become smart as we’ve grown. We never stop learning.

The same applies to Google. We as human beings are imperfect. How could we create a perfect search engine? Are we able to?

I would love to talk with you more. Share your thoughts or ask questions in the comments below.

Image Credit: ordinarypoet.blogspot.ro