When I start helping companies that have experienced a serious drop in organic search traffic, I almost always begin the engagement by conducting a thorough SEO audit. Whether it’s Panda, a botched redesign or CMS migration, or a slow decline in rankings over time, audits are critically important to understanding the root causes of the drop.

As part of those audits, I typically crawl client websites multiple times, and with multiple tools. I don’t want to leave any stone unturned, especially since serious technical problems often lie below the surface. And those technical problems can cause serious SEO issues.

Once an audit is completed, the results are presented, along with a remediation plan. This ensures that everyone involved is on the same page about what needs to change (including C-level executives, developers, designers, content producers, PMs, etc.).

This should be the point where the recovery process begins.

But there are times when changes don’t get implemented correctly. This is typically due to a breakdown in communication (which is common when SEO is new to key members of the team that will be executing changes). When this happens, companies run the risk of spinning their wheels, or worse, the situation could spawn more SEO problems.

This is why it’s critically important for SEOs to not just audit websites, but to continually check and recrawl those sites to ensure all changes are implemented correctly. If not, your shiny audit won’t be worth the PowerPoint slides it’s written on.

How do you ensure changes are implemented correctly? Enter the recrawl, and the power of hunting down problems before they cause serious issues.

Recrawling is Critically Important For Catching SEO Problems Early

Recrawls provide an important step in the recovery process.

Let’s face it, nobody is perfect. There are always several ways to implement technical changes. I know, I used to do a lot of web application development. And for developers, what might seem like a smart way to implement technical changes could actually send a website down a strange and dangerous path towards SEO disaster.

The recrawl can stop this from happening, even when flawed changes are on the brink of being released to production.

5 Examples of How Recrawls Nipped SEO Problems in the Bud

What follows are five examples of how actual recrawls identified serious SEO problems. Hopefully these examples will demonstrate to you the power of the recrawl.

1. Cloaking an Over-Optimized Navigation

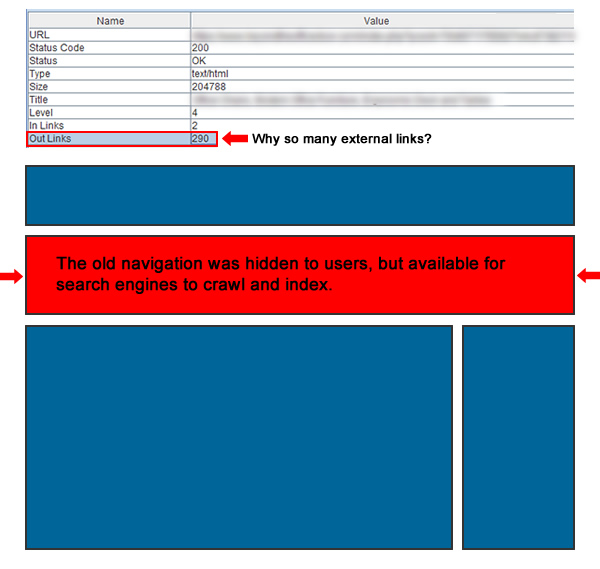

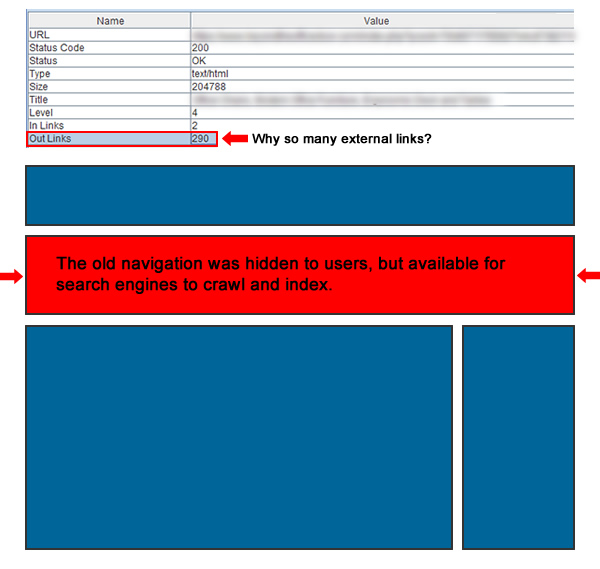

After performing a comprehensive audit, I recommended several changes to the core navigation of a large website (over 10 million webpages). There were simply too many links in the navigation, including over-optimized links. My recommendation was to streamline the navigation, provide a contextual navigation throughout the site, and overall cut down on how heavy the nav was.

I received an email from my client that some of my audit recommendations were implemented, so I decided to recrawl the site to check those changes across a number of categories and pages. But the crawl revealed that the full navigation (in its old form) was still present on the site.

So, I started manually checking certain pages and could not see the nav at all. But checking the source code revealed a huge problem. The dev team had decided to simply hide the old navigation rather than remove it.

All of the links, including over-optimized links, were sitting there for the bots to crawl, but they weren’t accessible to users. The site was now cloaking, and across 10 million pages.

Thankfully, the recrawl was performed as soon as the new changes went live. I was able to quickly contact my client’s dev team, they implemented a quick fix, and pushed those changes to production the same day.
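A check like this can be scripted so it runs against every recrawl. What follows is only a minimal sketch in Python, using the standard library, that lists links sitting inside elements hidden with an inline style or the `hidden` attribute. The names `HiddenLinkFinder` and `find_hidden_links` are hypothetical, and the sketch assumes reasonably well-formed HTML. It will not catch elements hidden through external CSS classes or JavaScript, so a manual source check is still needed.

```python
from html.parser import HTMLParser

# Elements that never have a closing tag, so they must not go on the stack.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


def _is_hidden(attrs):
    """True if the element hides itself via inline style or the hidden attribute."""
    attrs = dict(attrs)
    if "hidden" in attrs:
        return True
    style = (attrs.get("style") or "").replace(" ", "").lower()
    return "display:none" in style or "visibility:hidden" in style


class HiddenLinkFinder(HTMLParser):
    """Collects hrefs of <a> tags that are hidden or sit inside a hidden ancestor."""

    def __init__(self):
        super().__init__()
        self._stack = []        # one flag per open element: hidden (self or ancestor)?
        self.hidden_links = []

    def handle_starttag(self, tag, attrs):
        hidden = _is_hidden(attrs) or (bool(self._stack) and self._stack[-1])
        if tag == "a" and hidden:
            href = dict(attrs).get("href")
            if href:
                self.hidden_links.append(href)
        if tag not in VOID_TAGS:
            self._stack.append(hidden)

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self._stack:
            self._stack.pop()


def find_hidden_links(html):
    """Return the hrefs of all links that users cannot see but bots can crawl."""
    finder = HiddenLinkFinder()
    finder.feed(html)
    finder.close()
    return finder.hidden_links
```

Running `find_hidden_links` over the HTML of a sample of templates after each release gives a quick list of pages to inspect by hand.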

2. Hello Again, Followed Affiliate Links

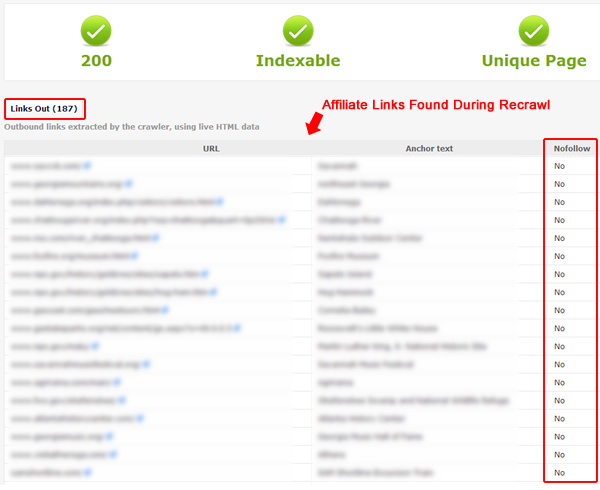

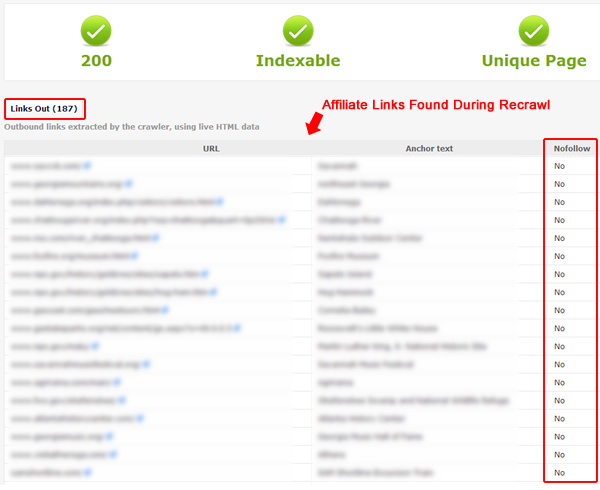

I was helping another large site with a serious Panda problem. The site had 1.5 million pages indexed, and earned most of its revenue via advertising.

Beyond advertising, the site had developed and tested various affiliate marketing relationships over the years. And having followed affiliate links (links that are not nofollowed) on a website in a post-Penguin and post-Panda world is a dangerous proposition for sure.

As part of my initial recommendations, any instance of an affiliate link was evaluated to determine if it should remain, or if it should be nuked. From a removal standpoint, there were some older affiliate links generating almost no revenue, so it wasn’t even worth having them on the site. And for the affiliate links that remained, I recommended each be nofollowed as quickly as possible.

Now, this recommendation was part of a larger set of recommendations (the final audit deck was approximately 50 slides in PowerPoint). Therefore, I knew the changes would have to be checked and analyzed to ensure all recommendations were accurately implemented.

After recrawling the site, many of the affiliate links were taken care of. All looked good, until I noticed certain pages with a large number of followed external links. And this was a section that was somewhat buried on the site.

Upon manually checking those pages, I found a directory-like structure with many pages of followed affiliate links. Those links hadn’t been addressed, and to be honest, I’m pretty confident my client had little idea those pages were still active. Needless to say, we formed a plan for nofollowing all of the links as quickly as possible (although I recommended nuking the pages if they provided little value for users).
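Spotting pages with a large number of followed external links is easy to automate. The following is a rough Python sketch, not a definitive implementation, that lists external links on a page that lack `rel="nofollow"`. The function name `followed_external_links` and the example hostnames are hypothetical. It treats any hostname different from `site_host` as external, so subdomains of your own site would need to be whitelisted separately.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class _AnchorCollector(HTMLParser):
    """Collects (href, rel tokens) for every <a> tag that has an href."""

    def __init__(self):
        super().__init__()
        self.anchors = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        href = attrs.get("href")
        if href:
            rel_tokens = set((attrs.get("rel") or "").lower().split())
            self.anchors.append((href, rel_tokens))


def followed_external_links(html, site_host):
    """Return external hrefs that do not carry rel="nofollow".

    Assumption: any hostname other than site_host counts as external.
    """
    collector = _AnchorCollector()
    collector.feed(html)
    collector.close()

    followed = []
    for href, rel_tokens in collector.anchors:
        host = urlparse(href).hostname  # None for relative (internal) URLs
        if host and host != site_host.lower() and "nofollow" not in rel_tokens:
            followed.append(href)
    return followed
```

Sorting pages by the length of the returned list surfaces buried sections like the directory described above.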

3. Twin Robots

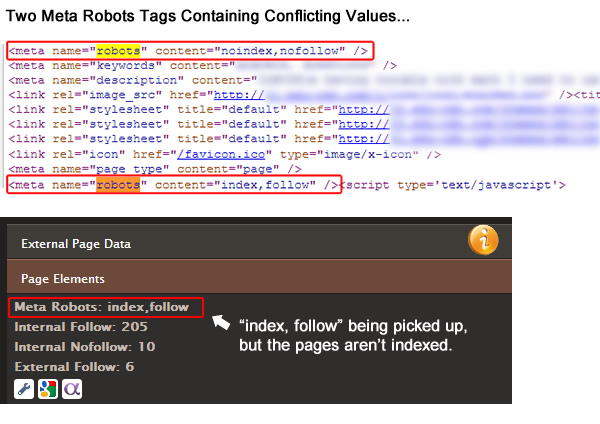

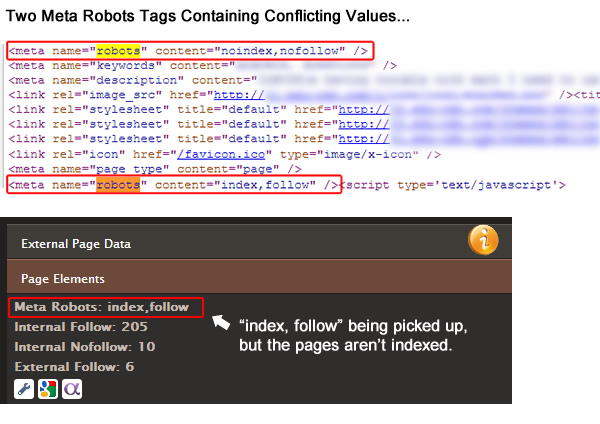

During audits, I keep a keen eye on how websites are using the meta robots tag. It never ceases to amaze me how often the robots tag gets botched. And when it’s botched, it can severely impact SEO.

During an audit of an ecommerce retailer, I noticed a number of pages that were not indexed, but they really should have been. So I recommended opening up those pages by removing the original meta robots tag which was using “noindex, nofollow”. The client quickly implemented those changes and was ready for a recrawl.

During the recrawl, I noticed many pages being flagged as “index, follow”, but the pages were still not being indexed properly. My recrawl reports all signaled that the meta robots tag was not the problem, based on the content values being used (“index, follow”). But therein lies the problem.

My crawl reports and plugins were correct, but only for one instance of the meta robots tag. Unfortunately, two meta robots tags were added across many pages of the site. And worse, one robots tag said to index the page, while the other informed the engines to not index the page.

I don’t think a lot of people know this, but if the engines come across multiple robots tags, they will follow the most restrictive content values. So in this case, the engines won’t index the page (by following the most restrictive directive “noindex”).

Needless to say, I quickly informed my client of the problem, and the fix was easy. In addition, my client was able to check with their developers to ensure this wasn’t happening elsewhere on the site. Remember, at first glance, the meta robots tag looked fine. It was only when I dug deeper that I found the second robots tag (and the conflict).
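Since many tools report only one meta robots tag per page, it can help to count the tags yourself. Here is a minimal Python sketch that collects every meta robots tag on a page and works out the combined result by applying the most restrictive value. The function name `audit_meta_robots` and the keys of the returned dictionary are hypothetical, and the sketch ignores bot-specific tags (such as `name="googlebot"`) and the X-Robots-Tag HTTP header.

```python
from html.parser import HTMLParser


class _RobotsMetaCollector(HTMLParser):
    """Collects the content value of every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.contents = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attrs = dict(attrs)
        if (attrs.get("name") or "").strip().lower() == "robots":
            self.contents.append(attrs.get("content") or "")


def audit_meta_robots(html):
    """Summarize all meta robots tags, applying the most restrictive directive."""
    collector = _RobotsMetaCollector()
    collector.feed(html)
    collector.close()

    # Merge the directives from every tag into one set of lowercase tokens.
    tokens = set()
    for content in collector.contents:
        tokens.update(t.strip().lower() for t in content.split(",") if t.strip())

    noindex = bool(tokens & {"noindex", "none"})
    nofollow = bool(tokens & {"nofollow", "none"})
    return {
        "tag_count": len(collector.contents),
        "indexable": not noindex,
        "followable": not nofollow,
        # True when the page says both "do" and "don't" for the same action.
        "conflict": ("index" in tokens and noindex) or ("follow" in tokens and nofollow),
    }
```

Any page where `tag_count` is greater than one, or where `conflict` is true, deserves a manual look at the source.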

4. Inheritance Causing Duplicate Titles and Metadata

While auditing websites, I also pay particular attention to content optimization. The last thing you want on any site, and especially a large-scale site, is a case of over-optimized titles, descriptions, headings, etc.

I’ve been helping a large publisher with Phantom and Panda problems, and have conducted several large audits on the site. In addition, I’ve performed surgical audits, digging deep into various sections of the site to find SEO problems.

Many changes have been implemented across the site over the past few months, and all looked good based on my checks. But during a recent surgical audit of an important section of the site, I noticed some strange findings in the recrawl report. Many duplicate titles were being flagged throughout the section, which had never happened before.

Upon digging in manually, I noticed that many pages were affected by a technical glitch that was recently pushed to production. Many sub-pages within certain categories were taking on the titles and metadata from the top-level category page. This is problematic because:

- Unique and well-optimized titles are still very important. You don’t want the same top-level title on every page within a category (if those pages contain unique content that should be optimized properly). Those sub-pages could end up not ranking well for target queries.

- You definitely don’t want to send crazy duplicate signals to the engines in a post-Panda world. Having potentially thousands of pages, or tens of thousands, with the same exact metadata can be very problematic content quality-wise.

Upon picking up this problem, I immediately communicated the issues to my client’s team. The lead developer was literally solving the problem during our web conference (he knew exactly what the problem was as I was showing it to them). He planned to implement those changes that week.
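Most crawlers flag duplicate titles for you, but the same check is simple to run on any crawl export. As a small sketch, assuming you already have a mapping of URL to title from your crawler (the function name `find_duplicate_titles` and the example URLs are hypothetical), this Python snippet groups URLs that share a title after normalizing whitespace and case:

```python
from collections import defaultdict


def find_duplicate_titles(pages):
    """Group URLs by title and return only the titles used on more than one URL.

    pages: dict mapping URL -> <title> text, e.g. built from a crawl export.
    """
    groups = defaultdict(list)
    for url, title in pages.items():
        # Collapse whitespace and ignore case so trivial differences still match.
        key = " ".join(title.split()).lower()
        groups[key].append(url)
    return {title: sorted(urls) for title, urls in groups.items() if len(urls) > 1}


if __name__ == "__main__":
    crawl = {
        "/widgets/": "Widgets | Example Store",
        "/widgets/blue/": "Widgets | Example Store",
        "/widgets/red/": "Red Widgets | Example Store",
    }
    for title, urls in find_duplicate_titles(crawl).items():
        print(title, urls)
```

Comparing the result between the previous crawl and the recrawl makes a newly introduced inheritance problem stand out quickly.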

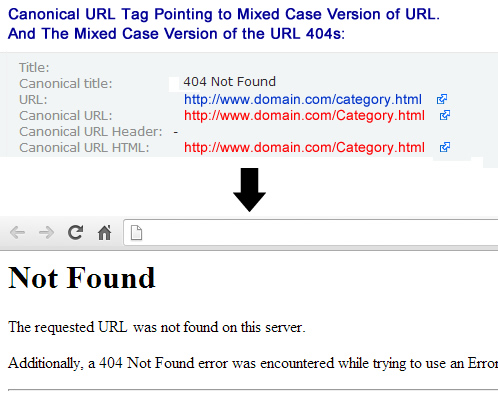

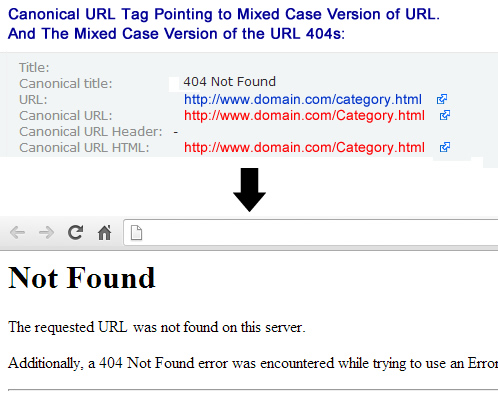

5. Rel Canonical and Mixed Case URLs That Bomb

Using the canonical URL tag can greatly help a site owner consolidate link equity and cut down on duplicate content. When used properly, you can tell the engines that all search equity should be passed to the canonical version of the URL. But similar to the meta robots tag, you have to be extremely careful when setting up the code. It’s one line of code… but the wrong implementation could cause serious SEO problems.

During an audit for an ecommerce retailer, I noticed an inconsistent use of the canonical URL tag across similar pages. Sometimes it was used, and other times it was completely left out.

To bring consistency to the site, and to help deal with potential duplicate content problems, I recommended implementing the canonical URL tag across all categories and product pages. The client moved quickly to implement the changes. And why wouldn’t they? It’s only one line of code, right?

But when checking the recrawl report, I noticed a strange format for the URL contained in the href of the canonical URL tag. The URLs were suddenly using mixed case, when the actual site URLs are lowercase (which they should be).

When I visited the URLs contained in the canonical URL tag, the pages returned 404s. You would never know this by simply looking at the URLs in the code… But when you visited the pages, they all returned a 404 header response code, or Page Not Found.

Talk about a disastrous situation. Each page was telling the engines to pass all search equity to URLs that 404. For this ecommerce retailer, the botched rel=canonical situation could end up sending organic search traffic off a cliff. Luckily, the problem was picked up during my recrawl analysis, and my client was able to fix the canonical URL tag across all pages.
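A quick scripted check can flag suspicious canonical URLs before anyone has to visit them. The following Python sketch is one possible approach, under the assumption that all site URLs should be lowercase, as they were for this client. The function name `check_canonical_case` and the issue codes are hypothetical. It only inspects the markup, so you would still need to request each canonical URL and confirm the response code.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class _CanonicalCollector(HTMLParser):
    """Collects the href of every <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attrs = dict(attrs)
        rel_tokens = (attrs.get("rel") or "").lower().split()
        if "canonical" in rel_tokens and attrs.get("href"):
            self.hrefs.append(attrs["href"])


def check_canonical_case(html):
    """Return a list of (issue_code, href) tuples; an empty list means no issue found.

    Assumption: every URL path on the site is supposed to be lowercase.
    """
    collector = _CanonicalCollector()
    collector.feed(html)
    collector.close()

    if not collector.hrefs:
        return [("missing", None)]

    issues = []
    if len(collector.hrefs) > 1:
        issues.append(("multiple", None))
    for href in collector.hrefs:
        path = urlparse(href).path
        if path != path.lower():
            issues.append(("mixed_case", href))
    return issues
```

Any `mixed_case` result is a candidate for a follow-up request to see whether the canonical URL actually resolves.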

Next Steps/Advice

Based on the above examples, I hope you can see the power of the recrawl for long-term SEO success. Without thoroughly checking changes after an audit is produced, you run the risk of flawed implementations. And flawed implementations can make bad SEO situations even worse.

Based on my experience with audits, crawling, and recrawling, here are some recommendations:

- Assumptions Are The Mother of All SEO Problems: Don’t assume all changes will be implemented correctly. Schedule recrawls and subsequent analyses to make sure all changes are executed properly.

- Double and Triple Check with Devs: Make sure you follow up with the development team to make sure everyone clearly understands how to implement the changes (before they go off and implement them). It’s easy for everyone to yes you to death during the audit presentation, when there really might be some confusion. It’s safer to follow up with the dev team after the presentation to make sure technical changes are clear.

- The Power of Staging Servers: Try to use a staging server that’s password protected, or one that resides on your client’s network. This will enable you to safely push changes to the staging server, and then you can crawl and analyze those changes prior to releasing them to production. If not, you can push flawed code to production, which can quickly cause serious SEO problems.

- Know The Release Schedule: Know your release schedule, and get yourself on the tech email list. If you know when changes will be released to production, you can bother, I mean contact, those in charge about checking the changes.

- Document All Changes: Document all proposed changes, when they were completed, and your recrawls and subsequent crawl analyses. If you pick up problems during the recrawls, the documentation can help you determine when and why it happened. Then you can quickly correct the problems, while ensuring those issues don’t pop up again.

Summary – (Re)crawling To Success

Nothing is perfect in this world. When SEO changes are proposed, there will always be times when some changes are implemented incorrectly. And that’s OK, if you can hunt down and fix those gremlins before they cause bigger problems.

By recrawling changes before they hit your production server, you can keep your SEO platform as clean and strong as possible. A solid technical foundation is a prerequisite for strong SEO performance. Now go unleash the crawlers.