In May 2012, Google announced the Knowledge Graph, which is designed to show users factual summaries related to their search queries, such as biographies of notable figures, tour dates for musicians, and the casts of movies.

The purpose of the Knowledge Graph, according to Google, is to help users:

- Find the right thing

- Get the best summary

- Go deeper and broader to discover more about the search

The Knowledge Graph is available worldwide now, provided the search is made in English.

With the Knowledge Graph, Google is trying to make search more intelligent. The results are more relevant because the search engine understands the entities behind a query, and the nuances in their meaning, in the same way the user does. This makes the search engine “think” more like the user.

How is the Knowledge Graph Transforming Google?

Google says:

“We’ve always believed that the perfect search engine should understand exactly what you mean and give you back exactly what you want. And we can now sometimes help answer your next question before you’ve asked it, because the facts we show are informed by what other people have searched for.”

Recently, Matt Cutts delivered the opening keynote for SES San Francisco (August 2012) where he clearly mentioned that one of the key focuses for Google is to move away from being a search engine and focus on becoming a knowledge engine. Google is so committed to this that Google’s Search Quality team has been renamed to Google’s Knowledge Team.

Google has been working hard on creating the vast database of structured knowledge that powers the features. Today, the Knowledge Graph database holds information about 500 million people, places, and things. More importantly, though, it also indexes over 3.5 billion defining attributes and connections between these items. Google’s algorithms have access to this structured data. And, importantly, so do you!

Google is also indexing structured data found in the form of schemas and microformats on websites, which it shares with the webmasters in Google Webmaster Tools.

Certainly this kind of an added dimension to search results will make the SEOs put on their thinking caps to churn out strategies to retain and improve search visibility for their websites.

How is the Knowledge Graph Transforming SEO?

The Knowledge Graph is also paving the way to new approaches toward SEO.

Semantic search isn’t just about the web. It’s about all information, data, and applications.

Data is the foundation on which such a web and search world can exist. Data in itself is meaningless, but when data gets linked because of its relationships with various data sets available on the web, it becomes useful and meaningful. So when users type in a query, these inter-connected relationships add context and make the related information more powerful.

Content undoubtedly is the basis and the key to better results, but just adding content for the sake of adding content isn’t enough. The content needs to be correlated, connected, and shared via authority accounts on the web, such as on social media sites, to show it is valuable to users, which helps the website gain authority.

Furthermore, if this content is available in a structured format, the probability of it being visible in the Knowledge Graph increases. Content expressed as microdata on the web page gets correlated easily to the data vocabulary it is giving information about, making it easy for the search engine to find relevance and connectivity.
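To make the idea of microdata more concrete, here is a minimal Python sketch of how a program might read structured values out of a page. It is only an illustration and not how Google processes pages: the sample markup, the `MicrodataParser` class, and the `extract_microdata` helper are all hypothetical names invented for this example, and the parser only handles simple, flat markup.

```python
from html.parser import HTMLParser

# Hypothetical page fragment marked up with schema.org microdata.
SAMPLE_HTML = """
<div itemscope itemtype="http://schema.org/Person">
  <span itemprop="name">Jane Doe</span>
  <span itemprop="jobTitle">Editor</span>
</div>
"""


class MicrodataParser(HTMLParser):
    """Collects itemtype values and itemprop/text pairs from simple markup."""

    def __init__(self):
        super().__init__()
        self.item_types = []      # vocabulary types declared on the page
        self.properties = {}      # itemprop name -> text content
        self._current_prop = None

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if "itemtype" in attr_map:
            self.item_types.append(attr_map["itemtype"])
        if "itemprop" in attr_map:
            # Remember the property so the next text node is stored under it.
            self._current_prop = attr_map["itemprop"]

    def handle_data(self, data):
        text = data.strip()
        if self._current_prop and text:
            self.properties[self._current_prop] = text
            self._current_prop = None

    def handle_endtag(self, tag):
        # An element that closes without text leaves no pending property.
        self._current_prop = None


def extract_microdata(html):
    """Return (item types, properties) found in the given HTML string."""
    parser = MicrodataParser()
    parser.feed(html)
    return parser.item_types, parser.properties
```

Because the type and the properties are declared explicitly in the markup, a program does not have to guess that “Jane Doe” is a person's name, which is the kind of easy correlation to a data vocabulary described above.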

Google has stated that they only support a handful of these microdata types, including:

- Reviews

- People

- Products

- Businesses and organizations

- Recipes

- Events

- Music

- Video

If your website includes any of these types of content, you’re eligible for a microdata implementation, which should help future-proof your search presence.

In this new quality era of search, we have to move our focus away from keywords and toward the keyness factor of the content, that is, its potential to correlate with the searches made on search engines. The semantic web adds meaning to a query rather than just mapping words during the search process.

(Note: I know there is no such word as “keyness,” but by the “keyness factor” I mean the correlation, relevance, and essence of the content rather than the actual word-to-word keyword mapping.)

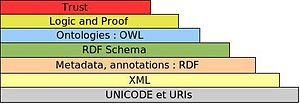

Image credit: Wikipedia

As you can see in the image above, we have URI and Unicode as the basis of the web. This can be called the first step which adds the keyness to the webpage and the content of that page that you want to promote organically on search engines or on the web.

The keyness factor can be derived from the context of the terms, terminology, and text used in the URL, title, headings, anchor text of the inbound links, alt text used on images, schema codes, meta tags, microformats, and body text.

If we focus on the keyness factor and try to correlate with a wide range of keyword data, rather than focusing on the keywords alone in the URLs, titles, and descriptions, that keyness gets passed on to the next layer of the emerging web. The pages then pass on more correlation and relevance, and the on-page signals sync with the off-page signals, which get directly indexed in the search engines in the form of feeds and sitemaps.

The second, third, and fourth layers have the XML, RDF, and Schema content. The content that we mostly have in this format is RSS feeds and sitemaps, which are in XML, while contact details, videos, reviews, events, etc. are now being represented more and more in the RDF format.
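As a small illustration of the XML layer, here is a Python sketch that builds a bare-bones sitemap. It assumes the standard sitemaps.org namespace; the `build_sitemap` helper and the example.com URLs are hypothetical, and a real sitemap would usually carry more detail per URL.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal sitemap document, as a string, for the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        # <loc> holds the address of the page to be indexed.
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical pages, used only for illustration.
sitemap_xml = build_sitemap([
    "https://example.com/",
    "https://example.com/knowledge-graph-seo",
])
```

Each URL sits in its own tagged element, so a search engine can read the list of pages directly instead of discovering them only by crawling links.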

Focus on These Metrics

Search is moving away from word to word mapping to content relevance and correlation. It’s high time we moved on from quantitative metrics to qualitative metrics. This can be done by moving our focus from the keywords to the keyness of the content.

For that, we have to focus on the following metrics, rather than rankings alone, to judge whether our web presence is passing the test of each layer.

- Content Keywords: Which give an idea of keyword variance in the index and relevance

- Search Queries: Which give an idea about correlation

- Number of Impressions: Which give an idea about how well the search engine is able to combine and sync the on page signals with the off-page signals.

- Landing Pages: This gives us an idea about how well each URL is getting indexed along with proper relevance and the correlation factor.

- Click-Through Rate: This indicates the success factor of the on-page titles and descriptions of the landing page for that relevant search query.
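The click-through rate in the list above is simply clicks divided by impressions. The following Python sketch shows the arithmetic on a few rows of per-query data; the figures are made up for illustration, and `click_through_rate`, `ctr_report`, and `SAMPLE_ROWS` are hypothetical names, not part of any Google tool.

```python
def click_through_rate(clicks, impressions):
    """Return clicks divided by impressions, or 0.0 when nothing was shown."""
    if impressions == 0:
        return 0.0
    return clicks / impressions


# Hypothetical per-query figures; not real data.
SAMPLE_ROWS = [
    {"query": "knowledge graph", "impressions": 1200, "clicks": 60},
    {"query": "structured data seo", "impressions": 400, "clicks": 10},
    {"query": "keyness factor", "impressions": 0, "clicks": 0},
]


def ctr_report(rows):
    """Map each query to its click-through rate as a percentage."""
    return {
        row["query"]: round(
            100 * click_through_rate(row["clicks"], row["impressions"]), 2
        )
        for row in rows
    }


report = ctr_report(SAMPLE_ROWS)
```

Looking at the rate per query, rather than a single site-wide number, makes it easier to see which titles and descriptions are working for which searches.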

Factors to Focus on When Writing & Sharing Content

- Content and Authorship Markup: The quality of the content and the authorship markup in the code of the page gives the identity to the writer or the brand. If the content meets the quality standards of Google and is represented using microformats then Google is able to extract the rich snippet for the author and other meta data used to represent schemas and microformats from the code of the page. This gives the content and the writer their due in SERPs.

- Connectivity: Tim Berners-Lee (the man who invented the World Wide Web and created the HTTP protocol) once said that all data on the web – whether it is government, enterprise, or social data – is inter-linked and has relationships, and all these signals establish your web presence. How well you refer to other sites having relevant content, and how many sites refer back to the content considering it a valuable resource, determine the inter-connectivity of data and documents. Other social signals like shares, likes, +1s, etc. are a medium to quantify these signals.

- Relevance: Relevance is self-explanatory, but what needs to be kept in mind is that every piece of content should be relevant to a specific topic so that it becomes the relevant landing page for the various permutations and combinations of the search queries related to that page or content. If the connectivity to the content and from the content is relevant and topical, it further adds to the relevance factor.

- Correlation: The content, connectivity, and the relevance of the content cause the correlation. And surely every SEO has heard the statement “Correlation is not causation.”

Some Points on Correlation & Causation

- Almost all SEO is correlative rather than causative.

- Moreover, a correlation need not be causative.

- You need a sizable chunk of data over a period of time (at least 4-6 months) in order to establish any connection between correlation and causation and come to any conclusion.

- This correlation analysis requires a lot of time, effort, and an eye for detail by an expert.

- Jumping to quick conclusions will just cause more confusion.
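To show what measuring a correlation looks like in practice, here is a minimal Python sketch that computes the Pearson correlation coefficient between two series. The weekly figures are invented for illustration and the `pearson` helper is a hypothetical name; a real analysis would need far more data, as noted above.

```python
from math import sqrt


def pearson(xs, ys):
    """Return the Pearson correlation coefficient of two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of products of deviations, and sums of squared deviations.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


# Hypothetical weekly figures; not real data.
weekly_shares = [12, 18, 25, 31, 40, 44]
weekly_impressions = [900, 1100, 1500, 1700, 2300, 2400]

r = pearson(weekly_shares, weekly_impressions)
```

A value of `r` close to 1 only says that the two series move together. It does not say that more shares caused more impressions, since both could be driven by something else, such as a seasonal rise in interest.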

Authority, Security & Trust

- Authority: The authorship markup gives the content writer or brand an identity, but if the content is shared by multiple users and authority accounts of that relevant industry, then it adds to the goodwill and generates an authority factor. The social signals by way of retweets, likes, +1s, user-generated content in the form of comments, etc. add magnitude to the signals. The quantification of social signals is still at the initial stages, as the social and search integration is also still at the budding stage. But the authority factor is one of the key quality factors to determine a site as a quality site, as mentioned in this article.

- Security: If the site safeguards the user’s privacy, and the user feels confident sharing the data asked for in a form or using a credit card for payment, then it adds to the trust factor. This factor has the potential to turn a first-time user into a regular visitor or online customer.

- Trust: Trust is the end result of all these factors in action. Trust is the emotional and logical aspect of the user’s decision to refer and recommend the site and its content after getting valuable information from the site.

It All Starts With Content

If you create content to high quality standards and it has in-depth information about a topic, then it will be shared by people and referred to by other sites, which will add to the connectivity and relevance. If such content has the added dimension of the security layer, then it has the potential to have a high authority and trust factor – making it a valuable resource Google will be unable to ignore.

Please share your views on what the Knowledge Graph means to the future of search and SEO in the comments below.
