In the past few months Google has really upped its game in manually and algorithmically adjusting for what it deems to be artificially inflated or “over-optimized” online marketing tactics. Such updates and events include Panda, the notice of death detection, and the Penguin webspam algorithm update.

Sweet. So while SEOs and webmasters this side of China are busy analyzing what might constitute over-optimization, let’s look at what an [under|below|sub-par|sans|zero] optimized site might look like (ladies and gentlemen, your obligatory keyword stuffing joke!) and whether it offers any insights.

The “Control” Variable

We have a client (who shall henceforth be known as Client Site) for whom we manage display advertising. It’s a very old site: thirteen-odd years old, in fact, and throughout that time it has regularly published content in the same style.

This website has great authority in its sector: a .co.uk exact-match domain with high traffic and page impressions, a whack-load of data, and a huge number of Page 1 rankings, including a few No. 1s for its core terms. The site sits in a niche within the women’s lifestyle sector and evolved from a labor of love into a commercial concern monetized through display advertising.

Nobody has ever solicited a single link to it! All of the site’s backlinks are there because another party chose to put them there.

While we may all be fretting over what makes a great anchor-text mix, home-page to deep-page linking ratio, PR spread, or whatever-the-hell-else, it might be interesting to see what a lack of interference looks like in one single case.

Domain/Sub-domain Link Spread

Authority flows through links (amongst a bunch of other things), but not all links are equal. A link pointing to http://clientsite.co.uk is distinct from a link pointing to the www subdomain, http://www.clientsite.co.uk, which can dilute the equity either URL might otherwise accumulate. Perhaps search engines are smart enough to transfer equity to a preferred URL?

Certainly you can set your preferred domain in Google Webmaster Tools. Other solutions are to 301-redirect non-preferred URLs to the preferred URL, or to canonicalize the preferred URL with a rel="canonical" tag.
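For illustration, here is a minimal sketch of the 301 approach as Python WSGI middleware, plus a helper that emits a rel="canonical" tag. It assumes a hypothetical preferred host of `www.clientsite.co.uk` (the post doesn’t say which variant Client Site prefers), and the names `PREFERRED_HOST`, `preferred_host_redirect`, `canonical_link_tag` and `demo_app` are made up for this example. In practice most sites would do this in their web server configuration instead.

```python
from html import escape

# Hypothetical preferred host; the post does not say which variant Client Site uses.
PREFERRED_HOST = "www.clientsite.co.uk"


def canonical_link_tag(path, preferred_host=PREFERRED_HOST):
    """Build a rel="canonical" tag pointing at the preferred URL for `path`."""
    href = escape(f"http://{preferred_host}{path}", quote=True)
    return f'<link rel="canonical" href="{href}">'


def preferred_host_redirect(app, preferred_host=PREFERRED_HOST):
    """Wrap a WSGI app so requests for any other host get a 301 to the preferred host."""
    def middleware(environ, start_response):
        # Drop any port and compare case-insensitively.
        host = environ.get("HTTP_HOST", "").split(":")[0].lower()
        if host and host != preferred_host:
            location = f"http://{preferred_host}{environ.get('PATH_INFO', '/')}"
            query = environ.get("QUERY_STRING", "")
            if query:
                location += f"?{query}"
            start_response("301 Moved Permanently", [("Location", location)])
            return [b""]
        return app(environ, start_response)
    return middleware


def demo_app(environ, start_response):
    """Stand-in application that returns a page containing its canonical tag."""
    start_response("200 OK", [("Content-Type", "text/html")])
    return [canonical_link_tag(environ.get("PATH_INFO", "/")).encode()]
```

With this wrapper, a request for host `clientsite.co.uk` and path `/beauty/` would get a `301` with `Location: http://www.clientsite.co.uk/beauty/`, while requests to the preferred host pass straight through to the page.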

All this aside, any link builder with an informed strategy will actively seek links to the preferred URL, particularly given that a 301 redirect doesn’t pass the full “juice”.

This data comes from a Majestic Standard Report. Yes, there are 301s in place, but notice the chunky spread of links across these three URLs. All of these links occurred in the wild, and of course those “linkers” have no idea what the preferred URL is.
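To reproduce this kind of breakdown from your own backlink export, a sketch like the one below counts links per target URL variant and each variant’s share of the total. The `backlinks` list is made-up sample data, not Client Site’s figures, and the input shape (pairs of source URL and target URL) is an assumption rather than Majestic’s actual export format.

```python
from collections import Counter
from urllib.parse import urlsplit


def variant_key(url):
    """Normalize only trivial differences: host case and a missing trailing '/'."""
    parts = urlsplit(url)
    return f"{parts.scheme}://{parts.hostname}{parts.path or '/'}"


def target_spread(backlinks):
    """Map each target URL variant to (link count, share of all links)."""
    counts = Counter(variant_key(target) for _source, target in backlinks)
    total = sum(counts.values())
    return {variant: (n, n / total) for variant, n in counts.most_common()}


# Hypothetical sample data for illustration only.
backlinks = [
    ("http://blog-a.example/post", "http://www.clientsite.co.uk/"),
    ("http://forum.example/thread/1", "http://www.clientsite.co.uk"),
    ("http://news.example/story", "http://WWW.clientsite.co.uk/"),
    ("http://blog-b.example/links", "http://clientsite.co.uk/"),
    ("http://directory.example/uk", "http://clientsite.co.uk"),
    ("http://blog-c.example/faves", "http://www.clientsite.co.uk/index.html"),
]

for variant, (n, share) in target_spread(backlinks).items():
    print(f"{variant}: {n} links ({share:.0%})")
```

The normalization is deliberately minimal: `www` and non-`www` hosts stay separate, because that split is exactly what the report is meant to expose.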

Anchor Text

For years, keyword anchor text (i.e., anchor text that includes or exactly matches the ranking term) has been considered a strong ranking factor. Recently, some case studies have shown this not to be the case. Conversely, in some sectors we still see sites ranking well through aggressive anchor text engineering, even if they may not stay around for long. However, many now agree that downplaying high levels of “money term” anchor text seems to be a feature of Google’s recent changes.
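To make the exact/partial distinction concrete, this sketch buckets anchors as exact match, partial match (the term appears as whole words inside a longer anchor) or other, for a single ranking term. The term and anchors are hypothetical, and real link tools apply their own, more involved matching rules.

```python
import re
from collections import Counter


def normalize(text):
    """Lower-case and collapse punctuation/whitespace, so 'Beauty Tips!' matches 'beauty tips'."""
    return " ".join(re.findall(r"[a-z0-9]+", text.lower()))


def classify_anchor(anchor, term):
    """Return 'exact', 'partial' or 'other' for one anchor against one ranking term."""
    anchor_n, term_n = normalize(anchor), normalize(term)
    if anchor_n == term_n:
        return "exact"
    # Pad with spaces so 'beauty tips' does not match inside 'beauty tipsy'.
    if term_n and f" {term_n} " in f" {anchor_n} ":
        return "partial"
    return "other"


def anchor_mix(anchors, term):
    """Share of anchors falling into each bucket for the given term."""
    counts = Counter(classify_anchor(a, term) for a in anchors)
    total = sum(counts.values())
    return {bucket: counts[bucket] / total for bucket in ("exact", "partial", "other")}


# Hypothetical anchors for a hypothetical ranking term.
anchors = ["Beauty Tips", "best beauty tips for winter", "click here", "www.clientsite.co.uk"]
print(anchor_mix(anchors, "beauty tips"))
```

A profile dominated by the "exact" bucket is the kind of anchor text engineering discussed above; a naturally linked site tends to show far more in "other".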

So what do the top anchors look like for Client Site?

I took the top 10 unique anchors from www.linkresearchtools.com (which together account for 59.4 percent of all anchors) and entered each one into Wordle as many times as it occurs.
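The arithmetic behind a “top 10 anchors = X percent” figure, and the repeated-word input for a word cloud, can be sketched as follows. The anchor list is hypothetical (not linkresearchtools.com’s export format), and joining multi-word anchors with `~` is an assumed convention here; check what your word-cloud tool expects.

```python
from collections import Counter


def top_anchor_share(anchors, n=10):
    """Return the n most common anchors with counts, and their combined share of all anchors."""
    if not anchors:
        return [], 0.0
    top = Counter(anchors).most_common(n)
    share = sum(count for _anchor, count in top) / len(anchors)
    return top, share


def wordle_input(top):
    """Repeat each anchor once per occurrence so its size in the cloud tracks frequency.

    Spaces become '~' (assumed convention) so multi-word anchors stay together.
    """
    lines = []
    for anchor, count in top:
        lines.extend([anchor.replace(" ", "~")] * count)
    return "\n".join(lines)


# Hypothetical anchor occurrences, one entry per link.
anchors = ["clientsite"] * 5 + ["click here"] * 3 + ["beauty tips"] * 2 + ["home"]
top, share = top_anchor_share(anchors, n=2)
print(f"Top {len(top)} anchors cover {share:.1%} of all anchors")
print(wordle_input(top))
```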

As an aside, I’d recommend this post from Majestic as background reading for anyone new to anchor text analysis and looking for the best ways to visualize this data. Personally, I love a good tag/word cloud, as do they.

Domain Diversity

The more diverse a backlink profile, the more authoritative it may be thought to be, since diversity implies less “sitewide” linking (i.e., links that appear on every page of a website) and the like. Besides, it wouldn’t make sense to multiply a link’s value (and its equity characteristics) by the number of times it occurs sitewide. That simply doesn’t fit the “vote” analogy many will be familiar with.

Client Site is far from diverse, at only 24 percent diversity. I would urge caution here: I’m in no way saying that sitewide links are natural and awesome, as many other factors are also considered, such as the context of these links to Client Site and their position on the page, to name a couple.

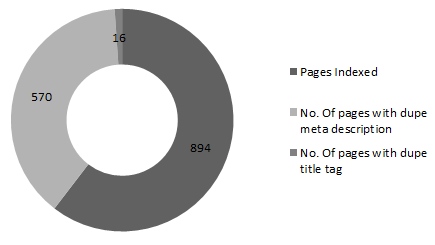

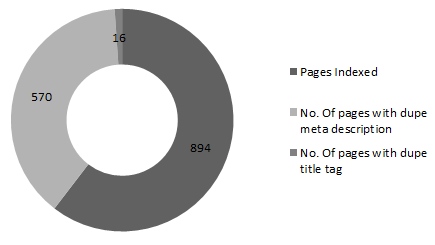
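The post doesn’t say exactly how the 24 percent figure is calculated. One common reading, assumed here, is unique referring domains divided by total backlinks. The sketch below computes that ratio and also lists hosts linking at or above a hypothetical `sitewide_threshold`, as a rough sitewide signal. It uses hostnames minus a leading `www.` rather than true registrable domains, which is a simplification.

```python
from collections import Counter
from urllib.parse import urlsplit


def referring_host(url):
    """Hostname of the linking page, minus a leading 'www.'.

    Simplification: proper tools reduce hosts to registrable domains via the
    Public Suffix List, which this sketch does not do.
    """
    host = urlsplit(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def link_diversity(source_urls, sitewide_threshold=50):
    """Return (unique hosts / total links, hosts linking at least `sitewide_threshold` times)."""
    per_host = Counter(referring_host(u) for u in source_urls)
    total = sum(per_host.values())
    ratio = len(per_host) / total if total else 0.0
    heavy = {host: n for host, n in per_host.items() if n >= sitewide_threshold}
    return ratio, heavy


# Hypothetical data: two one-off links plus a sitewide link from 98 pages of one site.
sources = (
    ["http://blog-a.example/post", "http://news.example/story"]
    + [f"http://www.partner.example/page/{i}" for i in range(98)]
)
ratio, heavy = link_diversity(sources)
print(f"Diversity: {ratio:.0%}; heavy linkers: {heavy}")
```

In this made-up example one sitewide link drags diversity down to 3 percent, which is why a low score on its own says little about whether the links are natural.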

Title Tag, Meta Description Duplication

Just thought I’d add in a bit of “on-page” SEO for good measure! This data comes from the site’s Google Webmaster Tools account.

While keyword stuffing your titles and descriptions is never a good idea, it is generally best practice to make these metadata values distinct for each page. After all, this data summarizes each page, and we’re warned time and again that substantially similar or duplicate content offers little to no value to users.
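Webmaster Tools flags duplicates for you, but to check a crawl of your own, a sketch along these lines groups pages that share a title or meta description. The `pages` dict is hypothetical crawl output, and the case/whitespace normalization is a choice made for this example.

```python
from collections import defaultdict


def find_duplicates(pages, field):
    """Group page URLs by a metadata field and keep only values shared by 2+ pages.

    `pages` maps URL -> {"title": ..., "description": ...}.
    """
    groups = defaultdict(list)
    for url, meta in pages.items():
        # Collapse whitespace and ignore case so trivial variations still count as duplicates.
        value = " ".join(meta.get(field, "").split()).lower()
        if value:
            groups[value].append(url)
    return {value: urls for value, urls in groups.items() if len(urls) > 1}


# Hypothetical crawl output.
pages = {
    "/": {"title": "Client Site", "description": "Lifestyle tips and news"},
    "/news/": {"title": "Client Site", "description": "Latest lifestyle news"},
    "/tips/": {"title": "Lifestyle Tips | Client Site", "description": "Lifestyle  tips and news"},
}

print(find_duplicates(pages, "title"))
print(find_duplicates(pages, "description"))
```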

Conclusion

I really want to stress that no sweeping generalizations or broad conclusions should be drawn from this single-site example; the purpose was simply to share a few features of a website that is a regular natural beauty.

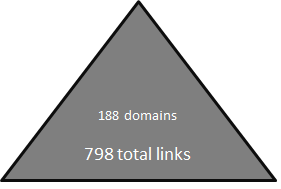

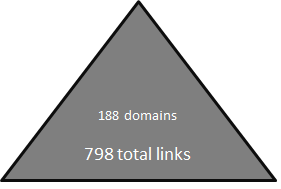

What we might infer in this case is that domain age, content relevance, quality and trust, and the geo-specific top-level domain (TLD) seem to be keeping this site where it is. Additionally, this case adds weight to the argument that keyword anchor text engineering is something to avoid. Plus, with only 188 unique links, we might infer that quality matters more than quantity.
